Known Issues in Cloudera Navigator 6.1.0

The following known issues and limitations from a previous release affect the data management components of Cloudera Navigator 6.1.0:

Authentication and Authorization

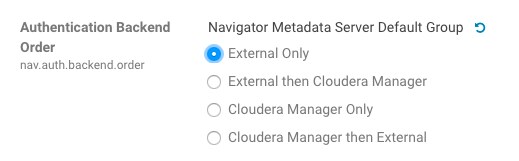

SAML authentication fails with "Cloudera Manager Only" setting

With the following combination of Cloudera Manager configuration properties set, authentication to Navigator fails:

- Authentication Backend Order: Cloudera Manager Only

- External Authentication Type: SAML

Workaround: To configure Navigator for SAML authentication, use an Authentication Backend Order other than "Cloudera Manager Only".

Affected Versions: Cloudera Navigator 6.0.0 and later

Fixed Versions: N/A

Cloudera Issue: NAV-6211

Errors when using local login return the browser to the SAML login page

With SAML authentication enabled for Navigator, administrators can use locallogin.html to log in with local credentials instead of SAML. However, if the administrator enters a wrong username or password, the page is redirected to login.html?error=true.

When that happens, the login.html URL is no longer a local login, and the login.html page address is redirected to the IdP address for SAML authentication.

Workaround: After the login failure, the URL changes to something similar to:

https://hostname:7187/login.html?error=true

To return to the local login page, change the browser address to a URL similar to:

https://hostname:7187/locallogin.html
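The address change in this workaround can be sketched in Python. This is an illustration only and not part of Navigator: the helper name to_local_login is hypothetical, and it assumes the failed-login URL has the form shown above.

```python
from urllib.parse import urlsplit, urlunsplit


def to_local_login(url: str) -> str:
    """Rewrite a Navigator login URL so that it points at locallogin.html.

    Hypothetical helper: keeps the scheme, host, and port, and drops the
    query string (for example, ?error=true).
    """
    parts = urlsplit(url)
    # Replace only the last path segment so that any path prefix survives.
    prefix = parts.path.rsplit("/", 1)[0]
    return urlunsplit(
        (parts.scheme, parts.netloc, prefix + "/locallogin.html", "", "")
    )


print(to_local_login("https://hostname:7187/login.html?error=true"))
```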

Affected Versions: Cloudera Navigator 6.0.0 and later

Fixed Versions: N/A

Cloudera Issue: NAV-5824

Cloudera Manager Configuration

Adding a blank audit filter removes filter configuration property

In Cloudera Manager, adding an empty rule to a service's Audit Event Filter and then saving the change causes all existing audit event filters to be lost. The filter configuration property is removed from Cloudera Manager's list of configuration properties. Reverting the change in History and Rollback neither restores the previous filters nor recreates the filter property.

Affected Versions: Cloudera Navigator 6.0.0 and later

Fixed Versions: Cloudera Navigator 6.2.1, 6.3.1

Cloudera Issue: NAV-6096

Overriding safety valve settings disables audit and lineage features

Customers or third-party applications such as Unravel may require that hive.exec.post.hooks be configured in a HiveServer2 safety valve. If audit or lineage is enabled for Hive, Cloudera Manager comments out the configured hive.exec.post.hooks value. The safety valve content shows the commented code:

<!--'hive.exec.post.hooks', originally set to 'com.cloudera.navigator.audit.hive.HiveExecHookContext,org.apache.hadoop.hive.ql.hooks.LineageLogger' (non-final), is overridden below by a safety valve-->

This automated change disables Navigator's auditing and lineage features without notification.

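Workaround: Manually merge the original HiveServer2 safety valve content for hive.exec.post.hooks with the new value, as described for this issue under Hive, Hue, Impala.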

Affected Versions: Cloudera Navigator 6.0.0 and later

Fixed Versions: N/A

Cloudera Issue: NAV-5331

Hive, Hue, Impala

Overriding safety valve settings disables audit and lineage features

Customers or third-party applications such as Unravel may require that hive.exec.post.hooks be configured in a HiveServer2 safety valve. If audit or lineage is enabled for Hive, Cloudera Manager comments out the configured hive.exec.post.hooks value. The safety valve content shows the commented code:

<!--'hive.exec.post.hooks', originally set to 'com.cloudera.navigator.audit.hive.HiveExecHookContext,org.apache.hadoop.hive.ql.hooks.LineageLogger' (non-final), is overridden below by a safety valve-->

This automated change disables Navigator's auditing and lineage features without notification.

Affected Versions: Cloudera Navigator 6.0.0 and later

Workaround: To fix this problem, manually merge the original HiveServer2 safety valve content for hive.exec.post.hooks with the new value. For example, in the case of Unravel, the new safety valve would look like the following:

<property>

<name>hive.exec.post.hooks</name>

<value>com.unraveldata.dataflow.hive.hook.HivePostHook,com.cloudera.navigator.audit.hive.HiveExecHookContext,org.apache.hadoop.hive.ql.hooks.LineageLogger</value>

<description>for Unravel, from unraveldata.com</description>

</property>
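The merge described in this workaround amounts to joining two comma-separated class lists without dropping or repeating entries. A minimal Python sketch follows; merge_hooks is a hypothetical helper, not a Cloudera tool, and it assumes that putting the third-party hook first, as in the Unravel example, is the order you want.

```python
def merge_hooks(*hook_lists: str) -> str:
    """Merge comma-separated hook class lists, keeping first-seen order."""
    merged = []
    for hooks in hook_lists:
        for hook in hooks.split(","):
            hook = hook.strip()
            # Skip empty entries and classes that are already listed.
            if hook and hook not in merged:
                merged.append(hook)
    return ",".join(merged)


# Value required by the third-party application (Unravel in this example).
custom = "com.unraveldata.dataflow.hive.hook.HivePostHook"
# Original value that Cloudera Manager sets for Navigator audit and lineage.
navigator = (
    "com.cloudera.navigator.audit.hive.HiveExecHookContext,"
    "org.apache.hadoop.hive.ql.hooks.LineageLogger"
)

print(merge_hooks(custom, navigator))
```

The printed string is the value to place in the hive.exec.post.hooks property of the safety valve.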

Cloudera Issue: NAV-5331

Lineage not generated for Pig operations on Hive tables using HCatalog loader

When accessing a Hive table using Pig, lineage is generated in Navigator when using physical file loads, such as:

A = LOAD '/user/hive/warehouse/navigator_demo.db/salesdata';

B = LIMIT A 16;

STORE B INTO '/user/hive/warehouse/navigator_demo.db/salesdata_sample_file' using PigStorage (';');

However, when accessing the Hive table using the HCatalog loader, lineage for the Pig operation is not generated, so it does not appear when browsing the source table lineage. For example:

A = LOAD 'navigator_demo.salesdata' using org.apache.hive.hcatalog.pig.HCatLoader();

B = LIMIT A 16;

STORE B INTO 'navigator_demo.salesdata_sample_hcatalog' using org.apache.hive.hcatalog.pig.HCatStorer();

Affected Versions: Cloudera Navigator 6.0.0 and later

Fixed Versions: N/A

Cloudera Issue: NAV-3411

Impala lineage delay when running queries from Hue

When using Hue to perform Impala queries, after running the query, the lineage doesn't show up in Navigator until Impala determines that the query is complete. Hue gives users the opportunity to pull another set of results on the same query, so Impala holds the query open. Lineage metadata is sent after Impala reaches its configured query timeout or an event such as another query or logging out of Hue occurs.

Workaround: Set low timeouts for queries in Hue, or add an Impala query timeout specifically to the Hue safety valve and set the timeout to 3-5 minutes so that the queries show up in Navigator after Hue has been idle for some time. Hue will notify users that the query needs to be run again, but it also releases the query resources. Here are the options:

- Safety Valve for hue_safety_valve_server.ini

[impala]

session_timeout_s=300

query_timeout_s=300

- Impala timeouts

See Setting the Idle Query and Idle Session Timeouts for impalad.

- Hue session settings

You can set or override the Hue and Impala default settings with session settings in the Impala Editor as described in IDLE_SESSION_TIMEOUT Query Option and QUERY_TIMEOUT_S Query Option. Note that these settings must be reset per session.
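As a rough illustration of the first option, the following Python sketch renders the [impala] snippet for a chosen timeout. The function name hue_impala_timeouts is hypothetical, and the 180-300 second range is simply the 3-5 minutes suggested above expressed in seconds; the output still has to be pasted into the Hue safety valve in Cloudera Manager by hand.

```python
import configparser
import io


def hue_impala_timeouts(seconds: int) -> str:
    """Render an [impala] snippet with matching session and query timeouts."""
    # Assumption: enforce the 3-5 minute range suggested by the workaround.
    if not 180 <= seconds <= 300:
        raise ValueError("the workaround suggests a timeout of 3-5 minutes")
    config = configparser.ConfigParser()
    config["impala"] = {
        "session_timeout_s": str(seconds),
        "query_timeout_s": str(seconds),
    }
    buffer = io.StringIO()
    # Write key=value pairs without spaces, as in the snippet above.
    config.write(buffer, space_around_delimiters=False)
    return buffer.getvalue().strip()


print(hue_impala_timeouts(300))
```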

HiveServer1 and Hive CLI support removed

Cloudera Navigator requires HiveServer2 for complete governance of Hive queries. Cloudera Navigator does not capture audit events for queries that are run on HiveServer1 or the Hive CLI, and lineage is not captured for certain types of operations that are run on HiveServer1.

If you use Cloudera Navigator to capture auditing, lineage, and metadata for Hive operations, upgrade to HiveServer2 if you have not done so already.

Affected Versions: Cloudera Navigator 6.x

Fixed Versions: N/A

Cloudera Issue: TSB-185

Streaming Audit Events

Error blocks second streaming target

When streaming audit messages to both Flume and Kafka, if the Flume client throws an exception, Navigator Audit Server does not send the same messages to Kafka. To recover from this problem, the Flume client needs to be working.

Affected Versions: Cloudera Navigator 6.x

Fixed Versions: N/A

Cloudera Issue: NAV-7143

Purge

Navigator Metadata Server purge jobs may not run if there are policies configured

Navigator Metadata Server purge can produce messages such as "Checking if maintenance is running" and then fail to run during the available window. This problem occurs when a scheduled purge job waits for extraction tasks to finish but while waiting, a policy job starts, preventing the purge job from running.

Workaround: Specifically, to stop policies from running when you are trying to ensure that purge jobs will run, you can delete policies or temporarily change them to run infrequently to give the purge job time to run. When purge jobs have caught up to their backlog of work, you can change the policies back to running more frequently. Note that simply disabling the policies is not sufficient.

More generally, if you find that scheduled purge jobs are not running because there are other Navigator tasks in progress, consider stopping Navigator extractors and setting policies to run much less frequently. Then manually run the metadata purge using an API call to match your Navigator version:

curl -X POST -u user:password "https://navigator_host:7187/api/vXX/maintenance/purge?deleteTimeThresholdMinutes=duration"

API versions correspond to Navigator versions as described in Mapping API Versions to Product Versions.
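The URL for the manual purge call can be assembled as in the following Python sketch. It only builds the string used in the curl command above; purge_url is a hypothetical helper, the API version is left as the vXX placeholder because it depends on your Navigator version, and the 60-day retention period is an illustrative value, not a recommendation.

```python
from urllib.parse import urlencode


def purge_url(host: str, api_version: str, threshold_minutes: int,
              port: int = 7187) -> str:
    """Build the maintenance purge URL used in the curl command above."""
    query = urlencode({"deleteTimeThresholdMinutes": threshold_minutes})
    return f"https://{host}:{port}/api/{api_version}/maintenance/purge?{query}"


# Illustrative value: keep 60 days of metadata, expressed in minutes.
retention_minutes = 60 * 24 * 60

# Replace "vXX" with the API version that matches your Navigator version.
print(purge_url("navigator_host", "vXX", retention_minutes))
```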

Affected Versions: Cloudera Navigator 6.0.0, 6.0.1, 6.1.0, 6.1.1

Fixed Versions: Cloudera Navigator 6.2.0

Cloudera Issue: NAV-7037

First purge job may run twice

Navigator purge jobs are scheduled using UTC. However, the first time Navigator runs a purge, the scheduler triggers the job twice: once in the UTC time zone and a second time in the local time zone. After that, the schedule is triggered as expected. Other than the first purge running at an unexpected time, there are no side effects of this issue.

Affected Versions: Cloudera Navigator 6.0.0 and later

Fixed Versions: N/A

Cloudera Issue: NAV-6666

Purge can create data that's too big for Solr to process

Solr's POST request payload limit is set to 2 MB, which can be exceeded when purging a large Navigator metadata storage directory. The purge job fails with an error similar to the following:

2018-05-31 02:42:23,959 ERROR com.cloudera.nav.maintenance.purge.hiveandimpala.PurgeHiveOrImpalaSelectOperations [scheduler_Worker-1]:

Failed to purge operations for DELETE_HIVE_AND_IMPALA_SELECT_OPERATIONS with error

org.apache.solr.client.solrj.impl.HttpSolrServer$RemoteSolrException:

Expected mime type application/octet-stream but got application/xml.

To work around this problem, set the following options in the Navigator Metadata Server Advanced Configuration Snippet (Safety Valve) for cloudera-navigator.properties in Cloudera Manager:

nav.solr.commit_batch_size=50000

nav.solr.batch_size=50000

Restart Navigator Metadata Server. Leave these options in place until more than one purge job has run successfully, then remove the options and restart Navigator Metadata Server.

Affected Versions: Cloudera Navigator 6.0.0 and later

Fixed Versions: N/A

Cloudera Issue: NAV-6452

Policy specifications and cluster names affect purge

Policies cannot use cluster names in queries. Cluster name is a derived attribute and cannot be used as-is.

Workaround: When setting move actions for Cloudera Navigator, if there is only one cluster known to the Navigator instance, remove the clusterName clause. Otherwise, identify the cluster by sourceId instead of clusterName. To find the sourceId, list the sources known to Navigator:

curl '<nav-url>/api/v9/entities/?query=type%3Asource&limit=100&offset=0'

Use the identity of the matching HDFS service for this cluster as the sourceId.
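Picking the sourceId out of the response to the query above can be sketched in Python. The sketch assumes that the response is a JSON array of source entities and that each entity carries identity, sourceType, and clusterName fields; verify these field names against the output of your own Navigator instance. The helper name hdfs_source_ids and the sample data are made up for illustration.

```python
import json
from typing import List


def hdfs_source_ids(response_text: str, cluster_name: str) -> List[str]:
    """Return identities of HDFS sources that belong to the given cluster.

    Assumes a JSON array of entities with identity, sourceType, and
    clusterName fields (an assumption about the response shape).
    """
    sources = json.loads(response_text)
    return [
        source["identity"]
        for source in sources
        if source.get("sourceType") == "HDFS"
        and source.get("clusterName") == cluster_name
    ]


# Made-up sample response for illustration only.
sample = json.dumps([
    {"identity": "a1", "sourceType": "HDFS", "clusterName": "Cluster 1"},
    {"identity": "b2", "sourceType": "HIVE", "clusterName": "Cluster 1"},
    {"identity": "c3", "sourceType": "HDFS", "clusterName": "Cluster 2"},
])

print(hdfs_source_ids(sample, "Cluster 1"))
```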

Affected Versions: Cloudera Navigator 6.0.0 and later

Fixed Versions: N/A

Cloudera Issue: NAV-3537

Spark

Spark Lineage Limitations and Requirements

- Lineage is produced only for data that is read/written and processed using the DataFrame and SparkSQL APIs. Lineage is not available for data that is read/written or processed using Spark's RDD APIs.

- Lineage information is not produced for calls to aggregation functions such as groupBy().

- The default lineage directory for Spark on Yarn is /var/log/spark/lineage. No process or user should write files to this directory—doing so can cause agent failures. In addition, changing the Spark on Yarn lineage directory has no effect: the default remains /var/log/spark/lineage.

Navigator doesn't recognize local files in Spark jobs

Spark jobs can use files on the local filesystem as job inputs or outputs. Navigator, however, only supports HDFS, Hive, and S3 assets as job inputs or outputs. When Navigator extracts metadata from Spark and encounters a local source type, the metadata is discarded and the following error appears in the Navigator Metadata Server log:

2018-10-11 12:14:26,192 WARN com.cloudera.nav.api.ApiExceptionMapper [qtp1574898980-23815]: Unexpected exception.

java.lang.RuntimeException: Source LOCAL isn't supported for Spark Lineage

Affected Versions: Cloudera Navigator 6.0.0, 6.0.1, 6.1.0, 6.1.1

Fixed Versions: Cloudera Navigator 6.2.0

Cloudera Issue: NAV-6811

Spark extractor enabled using safety valve deprecated

The Spark extractor that was included prior to CDH 5.11 and enabled by setting the safety valve nav.spark.extraction.enable=true is being deprecated and could be removed completely in a future release. If you are upgrading from CDH 5.10 or earlier and were using the extractor configured with this safety valve, be sure to remove the setting when you upgrade.

Upgrade Issues and Limitations

Upgrading Cloudera Navigator from Cloudera Manager 5.9 or Earlier Can be Extremely Slow

Upgrading a cluster running Cloudera Navigator to Cloudera Manager 5.10 (or higher) can be extremely slow due to an internal change made to the Solr schema in Cloudera Navigator 2.9. A Solr instance is embedded in Cloudera Navigator and supports its search capabilities. The Solr schema used by Cloudera Navigator has been modified in the 2.9 release to use datatype long rather than string for an internal id field. This change makes Cloudera Navigator far more robust and scalable over the long term.

| Metadata and lineage usage | Description |
|---|---|
| None | Deployments that use only the Cloudera Navigator audit capability, without metadata or lineage, do not have the issue. Back up the Navigator Metadata Server storage directory and then delete it before upgrading. |
| Storage directory < 60 GB | Deployments with relatively small Navigator Metadata Server data directories may take 1 to 2 days for the upgrade process to complete. See the workaround below for steps to take before upgrading to Cloudera Manager 5.10 to possibly reduce the upgrade time. |
| Storage directory > 60 GB | Deployments with relatively large Navigator Metadata Server data directories may take several days for the upgrade process to complete. See the workaround below for steps to take before upgrading to Cloudera Manager 5.10 to possibly reduce the upgrade time. |

Workaround: To reduce the time required for upgrading the Navigator Metadata Server data directories for deployments that currently run Cloudera Navigator 2.8 and use its metadata and lineage features, consider removing unneeded entries from the metadata before the upgrade. The Navigator Purge feature allows you to remove metadata for deleted entities and for entities and operations older than a specified date. For more information on what metadata you can remove with Purge, see Managing Metadata Storage with Purge.

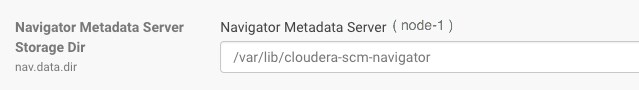

- Check the Navigator Metadata Server storage directory size. The path is /var/lib/cloudera-scm-navigator (default) unless configured otherwise. If you need to check the setting:

- Log in to Cloudera Manager Admin Console.

- Select .

- Click Configuration and then click the Navigator Metadata Server Scope filter:

- Confirm that the cluster uses the default configuration, or make a note of the location specified and the node name.

- Check the size of the actual directory contents. The following example shows a freshly installed system and so it is virtually empty.

[root@node-1 ~]# cd /var/lib/cloudera-scm-navigator

[root@node-1 cloudera-scm-navigator]# ls -l

total 12

drwxr-x--- 2 cloudera-scm cloudera-scm 113 Jul 12 06:56 diagnosticData

drwxr-x--- 2 cloudera-scm cloudera-scm 4096 Jul 12 09:16 extractorState

-rw-r----- 1 cloudera-scm cloudera-scm 36 Jul 12 05:54 instance.uuid

drwxr-x--- 4 cloudera-scm cloudera-scm 60 Jul 12 04:18 solr

drwxr-x--- 7 cloudera-scm cloudera-scm 4096 Jul 12 07:26 temp

[root@node-1 cloudera-scm-navigator]# cd solr

[root@node-1 solr]# ls -l

total 4

drwxr-x--- 4 cloudera-scm cloudera-scm 28 Jul 12 05:56 nav_elements

drwxr-x--- 4 cloudera-scm cloudera-scm 28 Jul 12 05:56 nav_relations

-rw-r----- 1 cloudera-scm cloudera-scm 450 Jul 6 16:13 solr.xml

[root@node-1 solr]#

- Back up the contents of the directory. Use Cloudera Manager BDR or your preferred method.

- Schedule a purge process as described in Scheduling the Purge Process.

Set options to purge metadata for deleted HDFS entities and any operations.

- Check the storage directory size again. If needed, re-run the purge with a shorter time span to retain metadata. If the storage directory consumption cannot be reduced below 60 GB, do not start the Cloudera Manager upgrade. Instead, contact Cloudera support to help you with this upgrade.
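The size check in these steps can be scripted. The following Python sketch totals the file sizes under the storage directory and compares the result with the 60 GB limit. The function names are hypothetical, the threshold is interpreted here as 60 * 1024^3 bytes (an assumption), and the script must run as a user that can read the directory.

```python
import os

# Assumption: treat the 60 GB limit as binary gigabytes.
THRESHOLD_BYTES = 60 * 1024**3


def directory_size(path: str) -> int:
    """Return the total size in bytes of all regular files under path."""
    total = 0
    for root, _dirs, files in os.walk(path):
        for name in files:
            file_path = os.path.join(root, name)
            # Skip symbolic links so that linked files are not counted twice.
            if not os.path.islink(file_path):
                total += os.path.getsize(file_path)
    return total


def below_upgrade_threshold(path: str, threshold: int = THRESHOLD_BYTES) -> bool:
    """Return True if the directory is smaller than the threshold."""
    return directory_size(path) < threshold


if __name__ == "__main__":
    # Default storage directory; change this if your deployment overrides it.
    storage_dir = "/var/lib/cloudera-scm-navigator"
    size_gb = directory_size(storage_dir) / 1024**3
    print(f"{storage_dir}: {size_gb:.1f} GB, "
          f"below limit: {below_upgrade_threshold(storage_dir)}")
```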

Affected Versions: Cloudera Navigator 6.x

Fixed Versions: N/A

Cloudera Issue: NAV-5046