Creating Highly Available Clusters With Altus Director

Limitations and Restrictions

- The procedure described in this section works with Altus Director 2.0 or higher and Cloudera Manager 5.5 or higher.

- Altus Director does not support migrating a cluster from a non-high availability setup to a high availability setup.

- Cloudera recommends sizing the master nodes large enough to support the desired final cluster size.

- Settings must comply with the configuration requirements described below and in the aws.ha.reference.conf file. Incorrect configurations can result in failures during initial bootstrap.

Editing the Configuration File to Launch a Highly Available Cluster

- Download the sample configuration file aws.ha.reference.conf from the Cloudera GitHub site. The cluster section of the file shows the role assignments and required configurations for the services where high availability is supported. The file includes comments that explain the configurations and requirements.

- Copy the sample file to your home directory before editing it. Rename the aws.ha.reference.conf file, for example, to ha.cluster.conf. The configuration file must use the .conf file extension. Open the configuration file with a text editor.

- Edit the file to supply your cloud provider credentials and other details about the cluster. A highly available cluster has additional requirements, as seen in the sample aws.ha.reference.conf file. These requirements include duplicating the master roles for highly available services.

The sample configuration file includes a set of instance groups for the services where high availability is supported. An instance group specifies the set of roles that are installed together on an instance in the cluster. The master roles in the sample aws.ha.reference.conf file are included in four instance groups, each containing particular roles. The names of the instance groups are arbitrary, but the names used in the sample file are hdfsmasters-1, hdfsmasters-2, masters-1, and masters-2. You can create multiple instances in the cluster by setting the value of the count field for the instance group. The sample file is configured for two hdfsmasters-1 instances, one hdfsmasters-2 instance, two masters-1 instances, and one masters-2 instance.
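As a rough illustration of how instance groups and the count field determine the number of role instances, the following Python sketch models the four groups as plain dictionaries. The group names and counts come from the paragraph above. The role placement shown for masters-1 and masters-2 is an assumption made for illustration and may differ from the sample aws.ha.reference.conf file. The data structure and the function name `role_totals` are hypothetical and are not part of Altus Director.

```python
from collections import Counter

# Instance groups: group name -> instance count and roles per service.
# Counts follow the text; the masters-1 and masters-2 role placement is
# assumed for illustration only.
INSTANCE_GROUPS = {
    "hdfsmasters-1": {
        "count": 2,
        "roles": {"HDFS": ["NAMENODE", "FAILOVERCONTROLLER", "JOURNALNODE"]},
    },
    "hdfsmasters-2": {
        "count": 1,
        "roles": {"HDFS": ["JOURNALNODE"]},
    },
    "masters-1": {
        "count": 2,
        "roles": {"YARN": ["RESOURCEMANAGER"], "ZOOKEEPER": ["SERVER"]},
    },
    "masters-2": {
        "count": 1,
        "roles": {"YARN": ["JOBHISTORY"], "ZOOKEEPER": ["SERVER"]},
    },
}


def role_totals(groups):
    """Count role instances: every instance in a group runs all of its roles."""
    totals = Counter()
    for group in groups.values():
        for service, roles in group["roles"].items():
            for role in roles:
                totals[(service, role)] += group["count"]
    return totals


totals = role_totals(INSTANCE_GROUPS)
```

With this layout, `totals[("HDFS", "JOURNALNODE")]` is 3: two instances from hdfsmasters-1 plus one from hdfsmasters-2.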

- HDFS

- Two NAMENODE roles.

- Three JOURNALNODE roles.

- Two FAILOVERCONTROLLER roles, each colocated to run on the same host as one of the NAMENODE roles (that is, included in the same instance group).

- One HTTPFS role if the cluster contains a Hue service.

- The NAMENODE nameservice, autofailover, and quorum journal name must be configured for high availability exactly as shown in the sample aws.ha.reference.conf file.

- Set the HDFS service-level configuration for fencing as shown in the sample aws.ha.reference.conf file:

    configs {
        # HDFS fencing should be set to true for HA configurations
        HDFS {
            dfs_ha_fencing_methods: "shell(true)"
        }
    }

- Three role instances are required for the HDFS JOURNALNODE role. This ensures a quorum for determining which is the active node and which are standbys.

For more information, see HDFS High Availability in the Cloudera Administration documentation.

- YARN

- Two RESOURCEMANAGER roles.

- One JOBHISTORY role.

For more information, see YARN (MRv2) ResourceManager High Availability in the Cloudera Administration documentation.

- ZooKeeper

- Three SERVER roles (recommended). There must be an odd number of servers, and a single server does not provide high availability.

- Three role instances are required for the ZooKeeper SERVER role. This ensures a quorum for determining which is the active node and which are standbys.

- HBase

- Two MASTER roles.

For more information, see HBase High Availability in the Cloudera Administration documentation.

- Hive

- Two HIVESERVER2 roles.

- Two HIVEMETASTORE roles.

For more information, see Hive Metastore High Availability in the Cloudera Administration documentation.

- Hue

- Two HUESERVER roles.

- One HTTPFS role for the HDFS service.

- One HUE_LOAD_BALANCER role.

For more information, see Hue High Availability in the Cloudera Administration documentation.

- Oozie

- Two SERVER roles.

- Oozie plug-ins must be configured for high availability exactly as shown in the sample aws.ha.reference.conf file. In addition to the required Oozie plug-ins, other Oozie plug-ins can be enabled. All Oozie plug-ins must be configured for high availability.

- Oozie requires a load balancer for high availability. Altus Director does not create or manage the load balancer. The load balancer must be configured with the IP addresses of the Oozie servers after the cluster completes bootstrapping.

For more information, see Oozie High Availability in the Cloudera Administration documentation.
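The role requirements listed above for each service can be checked mechanically before you launch a cluster. The following Python sketch is one possible way to do that, not a feature of Altus Director. It assumes you have written down the planned layout as a mapping from host name to a set of `SERVICE/ROLE` strings; that format, the host names, and the function name `check_ha_layout` are all hypothetical.

```python
# Exact role counts stated in the requirements above.
EXPECTED = {
    "HDFS/NAMENODE": 2,
    "HDFS/JOURNALNODE": 3,
    "HDFS/FAILOVERCONTROLLER": 2,
    "YARN/RESOURCEMANAGER": 2,
    "YARN/JOBHISTORY": 1,
    "HBASE/MASTER": 2,
    "HIVE/HIVESERVER2": 2,
    "HIVE/HIVEMETASTORE": 2,
    "HUE/HUESERVER": 2,
    "HUE/HUE_LOAD_BALANCER": 1,
    "OOZIE/SERVER": 2,
}


def check_ha_layout(hosts):
    """Return a list of problems for a host -> set-of-roles mapping."""

    def count(role):
        return sum(role in roles for roles in hosts.values())

    services = {role.split("/")[0] for roles in hosts.values() for role in roles}
    problems = []
    # Only check services that are actually part of the planned cluster.
    for role, expected in EXPECTED.items():
        if role.split("/")[0] in services and count(role) != expected:
            problems.append(f"{role}: expected {expected}, found {count(role)}")
    # One HTTPFS role is needed when the cluster contains Hue.
    if "HUE" in services and count("HDFS/HTTPFS") != 1:
        problems.append("HDFS/HTTPFS: expected 1 because Hue is present")
    # ZooKeeper needs an odd number of servers, and more than one.
    zk = count("ZOOKEEPER/SERVER")
    if "ZOOKEEPER" in services and (zk < 3 or zk % 2 == 0):
        problems.append(f"ZOOKEEPER/SERVER: need an odd number >= 3, found {zk}")
    # Each failover controller must share a host with a NameNode.
    for host, roles in hosts.items():
        if "HDFS/FAILOVERCONTROLLER" in roles and "HDFS/NAMENODE" not in roles:
            problems.append(f"{host}: FAILOVERCONTROLLER without a NAMENODE")
    return problems


# Hypothetical layout with HDFS, YARN, and ZooKeeper only.
layout = {
    "hdfs-a": {"HDFS/NAMENODE", "HDFS/FAILOVERCONTROLLER", "HDFS/JOURNALNODE"},
    "hdfs-b": {"HDFS/NAMENODE", "HDFS/FAILOVERCONTROLLER", "HDFS/JOURNALNODE"},
    "hdfs-c": {"HDFS/JOURNALNODE"},
    "m-a": {"ZOOKEEPER/SERVER", "YARN/RESOURCEMANAGER"},
    "m-b": {"ZOOKEEPER/SERVER", "YARN/RESOURCEMANAGER"},
    "m-c": {"ZOOKEEPER/SERVER", "YARN/JOBHISTORY"},
}
problems = check_ha_layout(layout)
```

For this layout the returned list is empty. The check covers only role counts and colocation; it does not validate the nameservice, fencing, or Oozie plug-in settings, which still need to match the sample file.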

- The following requirements apply to databases for your cluster:

- You can configure external databases for use by the services in your cluster and for Altus Director. If no databases are specified in the configuration file, an embedded PostgreSQL database is used.

- External databases can be set up by Altus Director, or you can configure preexisting external databases to be used. Databases set up by Altus Director are specified in the databaseTemplates block of the configuration file. Preexisting databases are specified in the databases block of the configuration file. External databases for the cluster must be either all preexisting databases or all databases set up by Altus Director; a combination of these is not supported.

- Hue, Oozie, and the Hive metastore each require a database.

- Databases for highly available Hue, Oozie, and Hive services must themselves be highly available. An Amazon RDS MySQL Multi-AZ deployment, whether preexisting or configured to be created by Altus Director, satisfies this requirement.
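The database rules above can be expressed as a small pre-flight check. The Python sketch below assumes the contents of the databaseTemplates and databases blocks have been read into two dictionaries keyed by service name; that keying, the service names `HIVE`, `HUE`, and `OOZIE`, and the function name `check_database_sources` are assumptions for illustration. It does not verify that a database is itself highly available.

```python
# Services that need their own database in a highly available cluster.
SERVICES_NEEDING_DATABASE = ("HIVE", "HUE", "OOZIE")


def check_database_sources(database_templates, databases):
    """Return problems for the two database blocks of a configuration.

    database_templates: databases to be set up by Altus Director.
    databases: preexisting external databases.
    Both are dicts keyed by service name (an assumption of this sketch).
    """
    problems = []
    # External databases must be all preexisting or all created by Director.
    if database_templates and databases:
        problems.append("databaseTemplates and databases cannot be combined")
    configured = set(database_templates) | set(databases)
    # Without an entry, the embedded PostgreSQL database would be used.
    for service in SERVICES_NEEDING_DATABASE:
        if service not in configured:
            problems.append(f"{service}: no external database configured")
    return problems


# All three databases created by Altus Director: no problems reported.
ok = check_database_sources({"HIVE": {}, "HUE": {}, "OOZIE": {}}, {})

# Mixing the two blocks is reported even though every service is covered.
mixed = check_database_sources({"HIVE": {}, "HUE": {}}, {"OOZIE": {}})
```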

Migrating HDFS Master Roles

You can migrate HDFS master roles using either of two methods:

- Migrating roles to a different instance: Cloudera recommends using this method because it gives you the most options:

- It allows you to move the master roles from one instance to another without necessarily removing the instance that you are migrating from.

- It allows you to make changes to the instance configuration, such as the AMI, security group, or instance type, rather than requiring you to move the master roles to an instance with a configuration identical to the one being replaced.

- Replacing an HDFS master instance with an identical copy: With this method, you replace the original instance with the new instance, creating an identical copy without changes to the instance configuration. At the end of the procedure, the original instance is deleted.

With either method, the process is partly manual and requires you to migrate the roles in Cloudera Manager. If a host running HDFS master roles fails in a highly available cluster, you can use Altus Director and the Cloudera Manager Role Migration wizard to move the roles to another host without losing the role states. What was previously the standby instance of each migrated role runs as the active instance. When the migration is completed, the role that runs on the new host becomes the standby instance.

The procedure for each method is described in the following sections.

Migrating Roles to a Different Instance

To migrate master roles from one instance to another, follow the steps below:

Step 1: Preparation

- Back up the Altus Director database.

- Check the logs to ensure that the cluster refresh is working properly, since cluster refresh is required for this procedure. The cluster refresh process needs access to both Cloudera Manager and the cloud provider. Misconfiguration that prevents this access might appear in the log file. Look for log messages that contain ClusterRefresher and RefreshClusters to ensure that no warnings or errors appear. Altus Director server logs are located at /var/log/cloudera-director-server/application.log on the Altus Director instance.
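The log check in the preparation step can be scripted. The following Python sketch reads the Altus Director server log and returns lines that mention ClusterRefresher or RefreshClusters and also look like warnings or errors. The log path and the two marker strings come from the text above; treating lines that contain the token `WARN` or `ERROR` as problems is an assumption about the log format, and the function names are hypothetical.

```python
import re

LOG_PATH = "/var/log/cloudera-director-server/application.log"
REFRESH_MARKERS = ("ClusterRefresher", "RefreshClusters")
# Assumption: problem lines carry a WARN or ERROR level token.
PROBLEM_LEVEL = re.compile(r"\b(WARN|ERROR)\b")


def refresh_problems(lines):
    """Return refresh-related log lines that look like warnings or errors."""
    return [
        line.rstrip("\n")
        for line in lines
        if any(marker in line for marker in REFRESH_MARKERS)
        and PROBLEM_LEVEL.search(line)
    ]


def scan_log(path=LOG_PATH):
    """Scan the log file on the Altus Director instance."""
    with open(path, encoding="utf-8", errors="replace") as handle:
        return refresh_problems(handle)
```

Run `scan_log()` on the Altus Director instance. An empty result suggests that no refresh warnings or errors were logged, but it is worth also confirming that recent ClusterRefresher or RefreshClusters messages appear at all, since a refresh that never runs produces no errors either.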

Step 2: Add instances (without roles) to the cluster in Altus Director

- Create a new instance group for each set of roles you will place on new instances in Step 3: Migrate roles to the new instances in Cloudera Manager. Typically, for HDFS master roles you will create three instances for the HDFS master roles, in two instance groups:

- Two of the instances will include all three HDFS master roles (NameNode role, Failover Controller role, and JournalNode role). Create one instance group for these three roles.

- The third instance will include the required third copy of the JournalNode role. Create a second instance group for this JournalNode role.

- In Altus Director, add the required number of new instances (the number of instances you are replacing), but do not assign roles to them. Altus Director installs the Cloudera Manager agent on these instances, but no roles, so that they are available as hosts for Cloudera Manager to use in the next step, Step 3: Migrate roles to the new instances in Cloudera Manager. For information about how to add instances in Altus Director, see Modifying the Number of Instances in an Existing Cluster.

- If you want to change the configuration of the new master instances, create new instance templates in the environment that includes your cluster. Configure these instance templates with the desired configurations for the instances you are replacing. If you want the new instances to be identical to the ones you are replacing, you can use the instance templates that were used to create the original instances.

Step 3: Migrate roles to the new instances in Cloudera Manager

In Cloudera Manager, migrate roles to the new instances. Refer to the Cloudera Manager and CDH documentation for instructions on migrating high availability master role instances.

Step 4: Wait for the Altus Director refresh to pick up the new role assignments

The Altus Director refresh runs approximately every five minutes. You can monitor the log file for the log messages for ClusterRefresher and RefreshClusters to see when this occurs.

Verify that the high availability master roles are as expected in the Altus Director UI. The old instances should contain no roles.
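Before terminating anything, it can help to make this check explicit. The Python sketch below assumes you have copied the role assignments shown in the Altus Director UI into a dictionary that maps each host to its list of roles; the host names, the dictionary format, and the function name `hosts_still_holding_roles` are hypothetical.

```python
def hosts_still_holding_roles(role_assignments, old_hosts):
    """Return the old hosts that still have at least one role assigned."""
    return sorted(host for host in old_hosts if role_assignments.get(host))


# Hypothetical assignments after the refresh has picked up the migration.
assignments = {
    "old-master-1": [],
    "old-master-2": ["JOURNALNODE"],
    "new-master-1": ["NAMENODE", "FAILOVERCONTROLLER", "JOURNALNODE"],
}
remaining = hosts_still_holding_roles(
    assignments, ["old-master-1", "old-master-2"]
)
```

Here `remaining` is `["old-master-2"]`, which means that host should not be terminated until its JournalNode role has been migrated and the refresh has run again.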

Step 5: Terminate old instances in Altus Director

In Altus Director, terminate the old instances. For information on terminating instances in Altus Director, see Modifying the Number of Instances in an Existing Cluster.

Replacing an HDFS Master Instance with an Identical Copy

Use this method to replace an instance with an exact copy on a new instance. If you want to make changes to the instance or its configuration, for example, to use a different instance type or an instance with more memory, use the workflow described above, Migrating Roles to a Different Instance.

- You cannot modify any instance groups on the cluster during the repair and role migration process.

- You cannot clone the cluster during the repair and role migration process.

- During role migration with this method, you cannot perform Altus Director operations on the cluster, such as growing or shrinking it.

- Instance-level post-creation scripts will not be run on any instances that are part of a manual migration. If you have instance-level post-creation scripts and want them to run during manual migration, run the scripts manually.

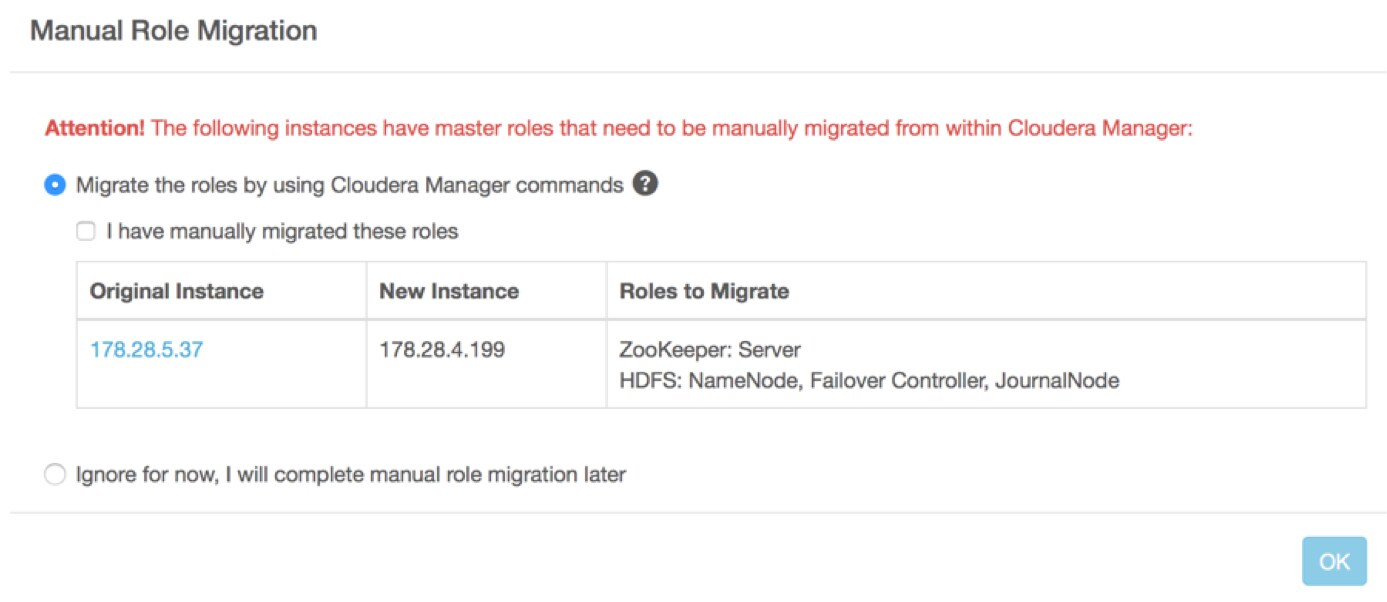

If you have completed Step 1 (on Altus Director) and intend to complete Step 2 (on Cloudera Manager) at a later time, you can confirm which IP address to migrate from or to by going to

the cluster status page in Altus Director and clicking either the link for migration in the upper left, or the Modify Cluster button on the right. A pop-up displays

the hosts to migrate from and to:

You do not need to check the boxes to restart and deploy client configuration at the start of the repair process. You restart and deploy the client configuration manually after role migration is complete.

Do not attempt repair for master roles that are not highly available. The Cloudera Manager Role Migration wizard works only for high availability HDFS roles.

Step 1: Create a new instance in Altus Director

- In Altus Director, click the cluster name and click Modify Cluster.

- Click the checkbox next to the IP address of the failed instance (containing the HDFS NameNode and colocated Failover Controller, and possibly a JournalNode). Click Repair.

- Click OK. You do not need to select Restart Cluster at this time, because you will restart the cluster after migrating the HDFS master roles.

Altus Director creates a new instance on a new host, installs the Cloudera Manager agent on the instance, and copies the Cloudera Manager parcels to it.

Step 2: Migrate roles and data in Cloudera Manager

In Cloudera Manager, open the cluster. On the Hosts tab, you see a new instance with no roles. The cluster is in an intermediate state, containing the new host to which the roles will be migrated and the old host from which the roles will be migrated.

Use the Cloudera Manager Migrate Roles wizard to move the roles.

See Moving Highly Available NameNode, Failover Controller, and JournalNode Roles Using the Migrate Roles Wizard in the Cloudera Administration guide.

Step 3: Delete the old instance in Altus Director

- In Altus Director, return to the cluster.

- Click Details. The message "Attention: Cluster requires manual role migration" is displayed. Click More Details.

- Check the box labeled "I have manually migrated these roles."

- Click OK.

The failed instance is deleted from the cluster.