Operating System Known Issues

Known issues and workarounds related to operating systems are listed below.

Continue reading:

- Kerberos credentials cache issue on keyring-enabled configurations

- YARN failure due to Debian 8 kernel limitation

- Linux kernel security patch and CDH services crashes

- Some OS versions reject weak ciphers for TLS

- SUSE Linux Enterprise Server 12 (SLES12) and Kerberos Credential Cache Issue

- Random JVM Hangs on RHEL 6.6 (Kernels 3.14-3.17)

- Leap-Second Events

Kerberos credentials cache issue on keyring-enabled configurations

GSS initiate failed [Caused by GSSException: No valid credentials provided (Mechanism level: Failed to find any Kerberos tgt)]

Affected OS/version: 5.x

Cloudera Bug: CDH-70529

Workaround: Use a file-based Kerberos credentials cache configuration in krb5.conf. For details, refer to SUSE Linux Enterprise Server 12 (SLES12) and Kerberos Credential Cache Issue.

YARN failure due to Debian 8 kernel limitation

...DATE TIME FATAL NodeManager Error starting NodeManager org.apache.hadoop.yarn.exceptions.YarnRuntimeException: Failed to initialize container executor at org.apache.hadoop.yarn.server.nodemanager.NodeManager.serviceInit(NodeManager.java:269) at org.apache.hadoop.service.AbstractService.init(AbstractService.java:163) ...

Bug: Debian 805498

Cloudera Bug: CDH-57554

Linux kernel security patch and CDH services crashes

A fatal error has been detected by the Java Runtime Environment: SIGBUS (0x7) at pc=0x00007fe91ef6cebc, pid=30321, tid=0x00007fe930c67700

Cloudera services for HDFS and Impala cannot start after applying the patch.

Commonly used Linux distributions are shown in the table below. However, the issue affects any CDH release that runs on RHEL, CentOS, Oracle Linux, SUSE Linux, or Ubuntu and that has had the Linux kernel security patch for CVE-2017-1000364 applied.

If you have already applied the patch for your OS according to the advisories for CVE-2017-1000364, apply the kernel update that contains the fix for your operating system (some of which are listed in the table). If you cannot apply the kernel update, you can work around the issue by increasing the Java thread stack size as detailed in the steps below.

| Distribution | Advisories for CVE-2017-1000364 | Advisory updates |

|---|---|---|

| Oracle Linux 6 | ELSA-2017-1486 | Oracle has fixed this problem in ELSA-2017-1723. |

| Oracle Linux 7 | ELSA-2017-1484 | Oracle has also added the fix for Oracle Linux 7 in ELBA-2017-1674. |

| RHEL 6 | RHSA-2017-1486 | Red Hat has fixed this problem for RHEL 6 and has marked this patch as outdated and superseded by RHSA-2017-1723. |

| RHEL 7 | RHSA-2017-1484 | Red Hat has fixed this problem for RHEL 7 and has marked this patch as outdated and superseded by RHBA-2017-1674. |

| SUSE | CVE-2017-1000364 | SUSE has also fixed this problem and the patch names are included in this same advisory. |

| Ubuntu 14.04 | USN-3335-1 | Fixed in USN-3338-2. |

Workaround

If you cannot apply the kernel update, you can set the Java thread stack size to -Xss1280k for the affected services using the appropriate Java configuration option or the environment advanced configuration snippet, as detailed below.

For role instances that have specific Java configuration option properties:

- Log in to Cloudera Manager Admin Console.

- Select the Impala service, and then click the Configuration tab.

- Type java in the search field to display Java-related configuration parameters. The Java Configuration Options for Catalog Server property field displays. Type -Xss1280k in the entry field, adding to any existing settings.

- Click Save Changes.

- Navigate to the HDFS service.

- Click the Configuration tab.

- Click the Scope filter DataNode. The Java Configuration Options for DataNode field displays among the properties listed. Enter -Xss1280k into the field, adding to any existing properties.

- Click Save Changes.

- Select the Scope filter NFS Gateway. The Java Configuration Options for NFS Gateway field displays among the properties listed. Enter -Xss1280k into the field, adding to any existing properties.

- Click Save Changes.

- Restart the affected roles (or configure the safety valves in the next section and restart when finished with all configurations).
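The steps above say to add -Xss1280k to any existing settings rather than replace them. As a minimal sketch of that merge, the hypothetical helper below (with_stack_size is not a Cloudera tool) takes an existing options string, drops any earlier -Xss value, and appends the workaround value. It assumes the options are separated by whitespace and contain no embedded spaces.

```shell
# Hypothetical helper: merge -Xss1280k into an existing JVM options string.
# The function body runs in a subshell so that "set -f" (no filename
# expansion while splitting the options) does not leak into the caller.
with_stack_size() (
    set -f
    result=""
    for opt in $1; do
        case "$opt" in
            -Xss*) ;;                                   # drop an earlier stack-size setting
            *)     result="${result:+$result }$opt" ;;  # keep every other option
        esac
    done
    printf '%s\n' "${result:+$result }-Xss1280k"
)

# Example: an existing value that already carries a smaller stack size.
with_stack_size '-Xmx1g -Xss512k -XX:+UseG1GC'
```

The resulting string is what would be entered in the Java Configuration Options field for the role.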

For role instances that do not have specific Java configuration options:

- Log in to Cloudera Manager Admin Console.

- Select the Impala service, and then click the Configuration tab.

- Click the Scope filter Impala Daemon and Category filter Advanced.

- Type impala daemon environment in the search field to find the safety valve entry field.

- In the Impala Daemon Environment Advanced Configuration Snippet (Safety Valve), enter:

JAVA_TOOL_OPTIONS=-Xss1280K

- Click Save Changes.

- Click the Scope filter Impala StateStore and Category filter Advanced.

- In the Impala StateStore Environment Advanced Configuration Snippet (Safety Valve), enter:

JAVA_TOOL_OPTIONS=-Xss1280K

- Click Save Changes.

- Restart the affected roles.
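After the restart, it can be useful to confirm that a role actually started with the variable set. On Linux, /proc/PID/environ holds the environment a process was started with, as NUL-separated NAME=value entries. The sketch below reads such a file and prints the JAVA_TOOL_OPTIONS value. The helper name is hypothetical, and the process name impalad in the commented usage line is an assumption about how the role appears on the host.

```shell
# Hypothetical helper: print the JAVA_TOOL_OPTIONS value recorded in a
# /proc/<pid>/environ-style file (NUL-separated NAME=value entries).
# Prints nothing if the variable is not set for that process.
tool_options_from_environ() {
    tr '\000' '\n' < "$1" | sed -n 's/^JAVA_TOOL_OPTIONS=//p'
}

# Usage on a live host (assumes the daemon's process name is impalad and
# that you have permission to read its environ file):
#   tool_options_from_environ "/proc/$(pgrep -o impalad)/environ"
```

If the workaround is in effect, the printed value includes -Xss1280K.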

The table below summarizes the parameters that can be set for the affected services:

| Service | Settable Java Configuration Option |

|---|---|

| HDFS DataNode | Java Configuration Options for DataNode |

| HDFS NFS Gateway | Java Configuration Options for NFS Gateway |

| Impala Catalog Server | Java Configuration Options for Catalog Server |

| Impala Daemon | Impala Daemon Environment Advanced Configuration Snippet (Safety Valve): JAVA_TOOL_OPTIONS=-Xss1280K |

| Impala StateStore | Impala StateStore Environment Advanced Configuration Snippet (Safety Valve): JAVA_TOOL_OPTIONS=-Xss1280K |

Cloudera Bug: CDH-55771

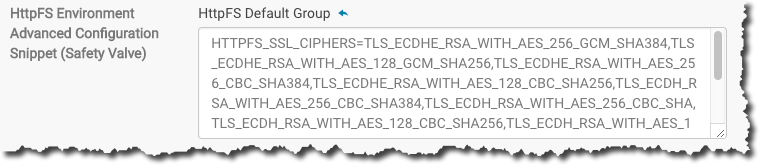

Some OS versions reject weak ciphers for TLS

For some OS configurations, the curl command cannot connect to certain CDH services that run on Apache Tomcat when the cluster has been configured for TLS/SSL. Specifically, HttpFS, KMS, Oozie, and Solr services reject connection attempts because the default cipher configuration uses weak temporary server keys (based on the Diffie-Hellman key exchange protocol).

Affected OS/version: Linux distributions that use Network Security Services (NSS) library version 3.28.4-3.el6_9, including Oracle Linux 6.x, RHEL 6.x, and CentOS 6.x. This library version conflicts with Tomcat 6.0.48, which is included with CDH 5.11. The issue has been resolved in CDH 5.11.1.

Workaround: Override the default cipher configuration using all the ciphers from the list below. Only commas separate each cipher, and there are no line endings or other characters in this list.

- For clusters not managed by Cloudera Manager, enter this list in the appropriate configuration file for the service (see the "Not managed" column in the table below). Restart the service.

TLS_ECDHE_RSA_WITH_AES_256_GCM_SHA384,TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256,TLS_ECDHE_RSA_WITH_AES_256_CBC_SHA384,TLS_ECDHE_RSA_WITH_AES_128_CBC_SHA256,TLS_ECDH_RSA_WITH_AES_256_CBC_SHA384,TLS_ECDH_RSA_WITH_AES_256_CBC_SHA,TLS_ECDH_RSA_WITH_AES_128_CBC_SHA256,TLS_ECDH_RSA_WITH_AES_128_CBC_SHA,TLS_ECDH_RSA_WITH_3DES_EDE_CBC_SHA,TLS_RSA_WITH_AES_256_CBC_SHA256,TLS_RSA_WITH_AES_256_CBC_SHA,TLS_RSA_WITH_AES_128_CBC_SHA256,TLS_RSA_WITH_AES_128_CBC_SHA,TLS_RSA_WITH_3DES_EDE_CBC_SHA

- For clusters managed by Cloudera Manager, follow the steps below.

| Service | Configuration property (cluster managed by Cloudera Manager) | Environment variable | Configuration file (Not managed) |

|---|---|---|---|

| HttpFS | HttpFS Environment Advanced Configuration Snippet (Safety Valve) | HTTPFS_SSL_CIPHERS | /etc/hadoop-httpfs/conf/httpfs-env.sh |

| KMS | Key Management Server Environment Advanced Configuration Snippet (Safety Valve) | KMS_SSL_CIPHERS | /etc/hadoop-kms/conf/kms-env.sh |

| Oozie | Oozie Server Environment Advanced Configuration Snippet | OOZIE_HTTPS_CIPHERS | /etc/oozie/conf/oozie-env.sh |

| Solr | Solr Server Environment Advanced Configuration Snippet | SOLR_CIPHERS_CONFIG | /etc/default/solr |

To override the ciphers on Cloudera Manager clusters:

- Log in to Cloudera Manager Admin Console.

- Search for the advanced configuration snippet name ("Configuration property") for each respective service role.

- Enter the appropriate environment variable in the entry field.

- Copy the list from here:

TLS_ECDHE_RSA_WITH_AES_256_GCM_SHA384,TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256,TLS_ECDHE_RSA_WITH_AES_256_CBC_SHA384,TLS_ECDHE_RSA_WITH_AES_128_CBC_SHA256,TLS_ECDH_RSA_WITH_AES_256_CBC_SHA384,TLS_ECDH_RSA_WITH_AES_256_CBC_SHA,TLS_ECDH_RSA_WITH_AES_128_CBC_SHA256,TLS_ECDH_RSA_WITH_AES_128_CBC_SHA,TLS_ECDH_RSA_WITH_3DES_EDE_CBC_SHA,TLS_RSA_WITH_AES_256_CBC_SHA256,TLS_RSA_WITH_AES_256_CBC_SHA,TLS_RSA_WITH_AES_128_CBC_SHA256,TLS_RSA_WITH_AES_128_CBC_SHA,TLS_RSA_WITH_3DES_EDE_CBC_SHA

- Paste the list as the value of the environment variable. For example, for HttpFS the entry takes the form HTTPFS_SSL_CIPHERS= followed by the list.

- Restart the service.
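Because the list must contain only commas between ciphers, a stray space or line break introduced while copying is easy to miss. The sketch below checks the list before printing the line for HttpFS. The helper name valid_cipher_list is hypothetical, and the export form of the httpfs-env.sh entry is an assumption about how that file is sourced. The variable name and the cipher list are taken from the text above.

```shell
# Cipher list copied from the workaround text: commas only, no whitespace.
CIPHERS='TLS_ECDHE_RSA_WITH_AES_256_GCM_SHA384,TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256,TLS_ECDHE_RSA_WITH_AES_256_CBC_SHA384,TLS_ECDHE_RSA_WITH_AES_128_CBC_SHA256,TLS_ECDH_RSA_WITH_AES_256_CBC_SHA384,TLS_ECDH_RSA_WITH_AES_256_CBC_SHA,TLS_ECDH_RSA_WITH_AES_128_CBC_SHA256,TLS_ECDH_RSA_WITH_AES_128_CBC_SHA,TLS_ECDH_RSA_WITH_3DES_EDE_CBC_SHA,TLS_RSA_WITH_AES_256_CBC_SHA256,TLS_RSA_WITH_AES_256_CBC_SHA,TLS_RSA_WITH_AES_128_CBC_SHA256,TLS_RSA_WITH_AES_128_CBC_SHA,TLS_RSA_WITH_3DES_EDE_CBC_SHA'

# Hypothetical helper: succeed only if the argument looks like a clean
# comma-separated list of cipher names.
valid_cipher_list() {
    case "$1" in
        ''|,*|*,|*,,*) return 1 ;;   # empty list or empty entry
        *[!A-Z0-9_,]*) return 1 ;;   # whitespace or any other stray character
        *)             return 0 ;;
    esac
}

# Print the line that would go into httpfs-env.sh (export form is assumed).
if valid_cipher_list "$CIPHERS"; then
    printf 'export HTTPFS_SSL_CIPHERS="%s"\n' "$CIPHERS"
fi
```

The same check applies to the other services by substituting the environment variable name from the table.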

Cloudera Bugs: CDH-52109, CDH-49236

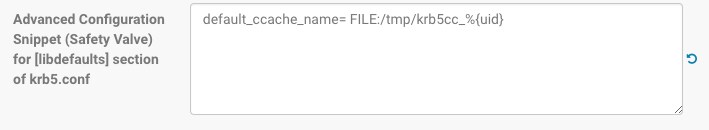

SUSE Linux Enterprise Server 12 (SLES12) and Kerberos Credential Cache Issue

Credential cache directory /run/user/uid does not exist

The issue is caused by a change in SLES12 systemd and the location of the Kerberos credentials cache for user accounts. This issue may emerge when password-less accounts, such as impala or flume, are executed using sudo -u user or su - user and the system attempts to retrieve the credential from the cache.

Workaround: Configure a file-based Kerberos credentials cache in the [libdefaults] section of krb5.conf:

[libdefaults]

default_ccache_name = FILE:/tmp/krb5cc_%{uid}

...
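To confirm which cache type a host is configured for, the setting can be read back from the file. The sketch below is a rough check under the assumption that default_ccache_name appears on its own line; the helper names are hypothetical, and /etc/krb5.conf is assumed as the default location.

```shell
# Hypothetical helper: print the default_ccache_name value from a
# krb5.conf-style file (last occurrence wins). Prints nothing if absent.
ccache_name() {
    sed -n 's/^[[:space:]]*default_ccache_name[[:space:]]*=[[:space:]]*//p' \
        "${1:-/etc/krb5.conf}" | tail -n 1
}

# Hypothetical helper: succeed only if the configured cache is file-based.
is_file_ccache() {
    case "$(ccache_name "$1")" in
        FILE:*) return 0 ;;
        *)      return 1 ;;
    esac
}

# Usage on a live host (assumes the file is at /etc/krb5.conf):
#   is_file_ccache /etc/krb5.conf && echo "file-based credentials cache"
```

After obtaining a ticket, the first line of klist output ("Ticket cache:") also shows the cache actually in use.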

For clusters managed by Cloudera Manager Server:

- Log in to Cloudera Manager Admin Console.

- Click the Administration tab and select Settings from the drop-down menu.

- Select Kerberos for the Category filter.

- Type the word "safety" in the Search field to find the Advanced Configuration Snippet (Safety Valve) settings for Kerberos.

- Enter the name=value pair for the property, using the default_ccache_name setting from the [libdefaults] snippet shown earlier in this section.

- Click Save Changes.

Cloudera Bugs: OPSAPS-34167, OPSAPS-41158

Random JVM Hangs on RHEL 6.6 (Kernels 3.14-3.17)

User Java processes, such as HBase RegionServers and HDFS DataNodes, can deadlock and hang for no apparent reason.

Impact: A Java application or daemon hangs. Running jstack against the process, or attaching a debugger, can unblock it.

Workaround:

Upgrade the operating system to one whose kernel is version 3.18 or later, or that includes the backported fix; for example, RHEL 6.6z and later. For information on checking your RHEL kernel version, see http://www.cyberciti.biz/faq/centsos-redhat-rhel-6-kernel-version/. In RHEL, the issue is fixed in the kernel-2.6.32-504.16.2.el6 update (April 21, 2015, https://rhn.redhat.com/errata/RHSA-2015-0864.html). The following example shows how to check for the fix:

% rpm -qp --changelog kernel-2.6.32-504.16.2.el6.x86_64.rpm | grep 'Ensure get_futex_key_refs() always implies a barrier'

Other distributions running kernel versions 3.14-3.17 may be affected.
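For distributions that report an upstream kernel version, a quick range test on the output of uname -r can flag hosts worth a closer look. The sketch below uses a hypothetical helper name and only compares the major and minor numbers. It does not detect vendor backports, so on RHEL the changelog check shown above remains the reliable test.

```shell
# Hypothetical helper: succeed if a kernel release string such as
# "3.16.0-4-amd64" falls in the 3.14 to 3.17 range named in this section.
kernel_in_affected_range() {
    major=${1%%.*}              # text before the first dot
    rest=${1#*.}                # text after the first dot
    minor=${rest%%[!0-9]*}      # leading digits of the remainder
    [ "$major" = 3 ] && [ "${minor:-0}" -ge 14 ] && [ "$minor" -le 17 ]
}

# Check the running kernel.
if kernel_in_affected_range "$(uname -r)"; then
    echo "kernel $(uname -r) is in the 3.14-3.17 range"
fi
```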

For more information, see https://groups.google.com/forum/#!topic/mechanical-sympathy/QbmpZxp6C64

Cloudera Bug: TSB-63

Leap-Second Events

Impact: After a leap-second event, Java applications (including CDH services) that use older Java and Linux kernel versions may consume almost 100% CPU. See https://access.redhat.com/articles/15145.

Leap-second events are tied to the time synchronization methods of the Linux kernel, the Linux distribution and version, and the Java version used by applications running on affected kernels.

Java is increasingly agnostic to system clock progression and therefore less susceptible to a kernel's mishandling of a leap-second event. Using JDK 7 or 8 should prevent issues at the CDH level (for CDH components that use the Java Virtual Machine).

Immediate action required:

(1) Ensure that the kernel is up to date.

- RHEL 5/6/7, CentOS 5/6/7 - 2.6.32-298 or higher

- Ubuntu Trusty 14.04 LTS - No action required

- Ubuntu Precise 12.04 LTS - 3.2.0-29.46 or higher

- Debian Jessie (8.x) - No action required

- Debian Wheezy (7.x) - 3.2.29-1 or higher

- Oracle Enterprise Linux (OEL) - Kernels built in 2013 or later

- SLES11 (SP2, SP3 and SP4)

  - SP2 - 3.0.38-0.5 or higher

  - SP3 and SP4 - No action required

- SLES12 - No action required

(2) Ensure that Java is up to date.

- Java 7 - At least jdk-1.7.0_65

- Java 8 - No action required

(3) Ensure that your systems use either NTP or PTP synchronization.

For systems not using time synchronization, update both the OS tzdata and Java tzdata packages to the tzdata-2016g version, at a minimum. For OS tzdata package updates, contact OS support or check updated OS repositories. For Java tzdata package updates, see Oracle's Timezone Updater Tool.
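The Java minimums listed above (at least jdk-1.7.0_65 for Java 7, no action for Java 8) can be checked with a simple comparison on the version string. The sketch below uses a hypothetical helper name and assumes the legacy 1.x.0_NN version format; any other format is reported as not covered instead of being guessed at.

```shell
# Hypothetical helper: compare a Java version string such as "1.7.0_65"
# against the minimums listed in this section.
# Returns 0 if no action is needed, 1 if the JDK is too old,
# and 2 if the version format is not covered by this sketch.
java_ok_for_leap_second() {
    case "$1" in
        1.7.0_*)
            upd=${1#1.7.0_}          # text after "1.7.0_"
            upd=${upd%%[!0-9]*}      # keep only the leading digits
            [ "${upd:-0}" -ge 65 ]
            ;;
        1.7.*) return 1 ;;           # Java 7 with no update number
        1.8.*) return 0 ;;           # Java 8: no action required
        *)     return 2 ;;           # format not covered here
    esac
}

# Usage: pass the version reported by your JDK, for example:
#   java_ok_for_leap_second 1.7.0_55 || echo "update the JDK"
```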

Cloudera Bugs: CDH-44788, TSB-189