Cloudera launches AI Inference Service, with NVIDIA NIM, accelerating GenAI development.

The freedom data science teams need, delivered by a cloud-native service that works for IT.

Cloudera AI (formerly Cloudera Machine Learning) enables enterprise data science teams to collaborate across the full data lifecycle with immediate access to secure, trusted data pipelines, scalable compute resources, and preferred tools. Streamline the process of operationalizing analytics at scale and integrate advanced capabilities like retrieval-augmented generation (RAG), multi-agent workflows, and Accelerators for Machine Learning Projects (AMPs).

Cloudera AI optimizes workflows across your business with robust, native tools for deploying, serving, and monitoring models. With Shared Data Experience (SDX) extended to models, you can govern and automate model cataloging, then surface results for collaboration across Cloudera Data Services, including Cloudera Data Warehouse and Cloudera Operational Database.

Optimize the AI data lifecycle by leveraging AMPs and RAG Studio, ensuring models and agents deliver trustworthy results. Transparent, repeatable workflows empower teams to innovate quickly.

Empower data science teams with scalable inference services and agent-based orchestration, maintaining stringent security and governance standards. Achieve agility and efficiency without compromise.

Cloudera AI use cases

Take AI from concept to reality

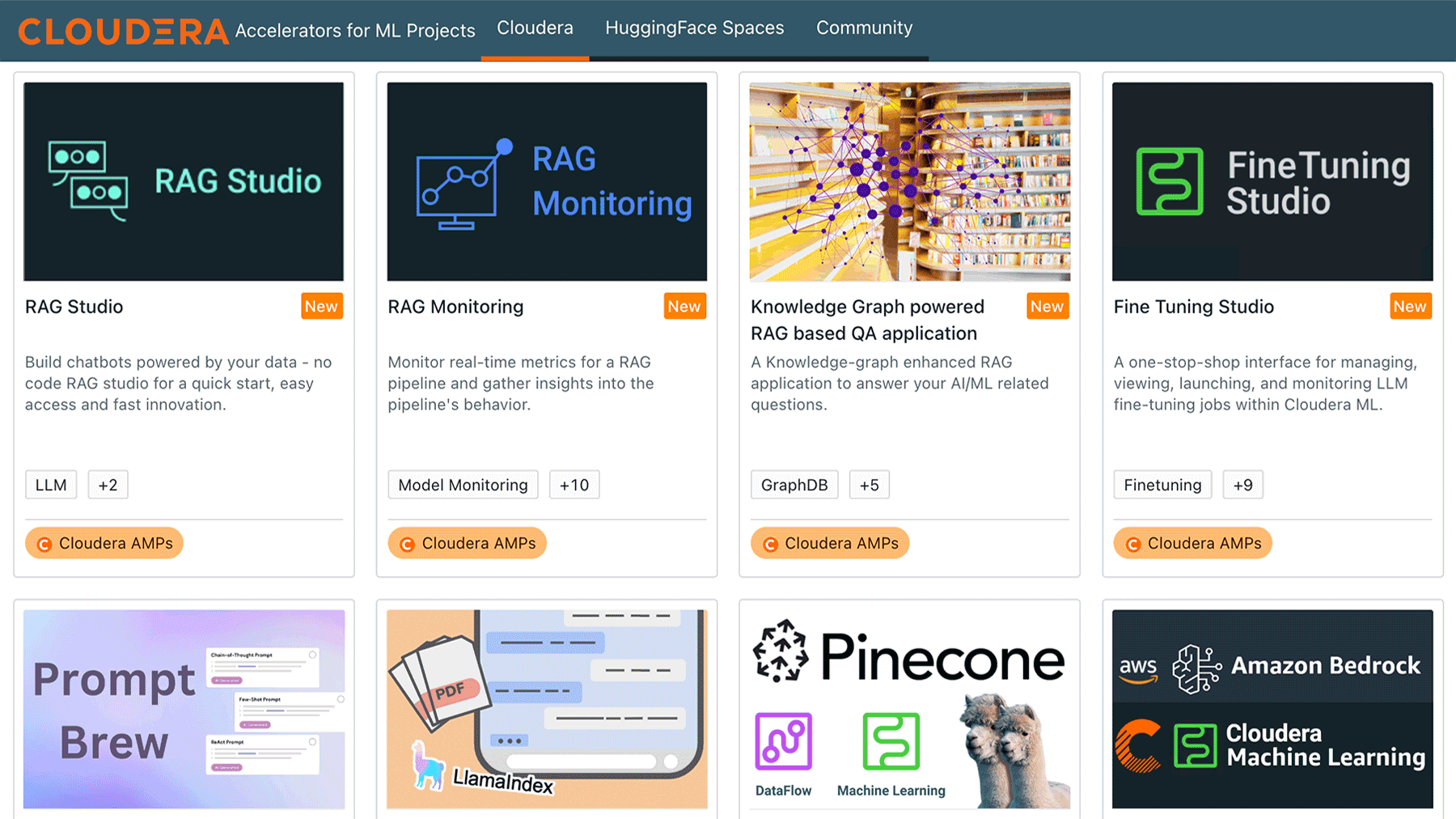

Reduce your time to value with Accelerators for Machine Learning Projects (AMPs).

AMPs are designed to jumpstart your AI initiatives by providing tailored solutions for specific use cases within Cloudera AI. They enable users to quickly achieve success by offering high-quality, pre-built reference examples that can be easily adapted to your unique requirements, reducing time to value for your projects and boosting business impact.

Personalizing recommendations to millions and improving anti-money laundering detection

1M+ personalized ML recommendations saving relationship managers 1,000+ hours in manual analysis.

Scale machine learning with MLOps

Benefit from improved transparency, collaboration, and ROI with MLOps.

MLOps helps establish continuous improvement, ensuring models stay current, while agentic workflows adapt to changing conditions. Maintain data security, compliance, and lifecycle governance for all AI workloads.

Enabling the digital lifestyle of mobile customers with a modern analytic environment

600PB of mobile data volume managed.

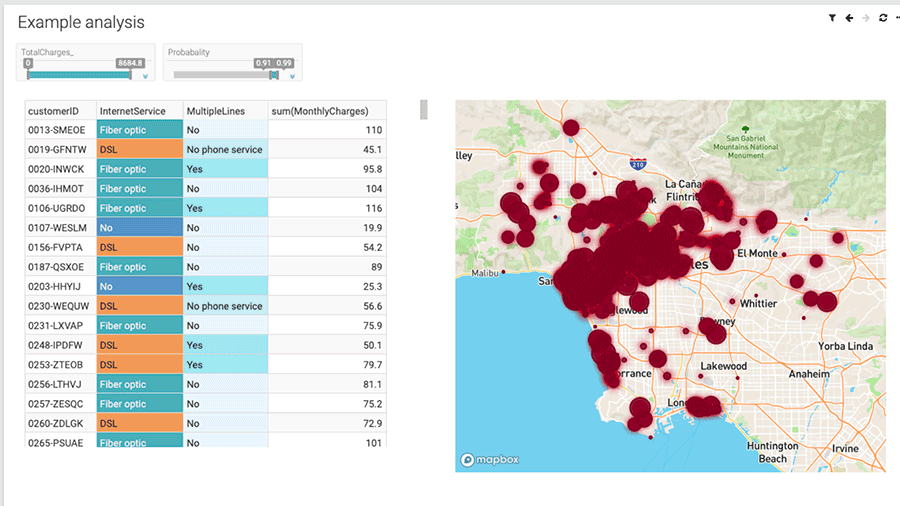

Enable exploratory data science

Compress the space between data exploration and actionable insights with dynamic, context-rich analytics.

Leverage certified datasets, integrated visualization, and RAG-enabled search to quickly uncover patterns, refine hypotheses, and inform decisions—all within a unified environment.

Increasing prediction accuracy by four times to accelerate the pace of discovery

1 million sub-second queries performed on a 2PB data set.

Deploy workspaces in a few clicks, giving data science teams access to project environments and elastic, automatically scaling compute resources for integrated development, training or fine-tuning, and model inference of any size for end-to-end enterprise AI.

With Cloudera AI, administrators and data science teams have full visibility from data source to production environment, enabling transparent workflows and secure, easy collaboration across teams.

Data scientists shouldn't have to switch between tools to discover, query, and visualize data sets. Cloudera AI provides an integrated environment enhanced by AI Assistants, offering a single UI for all your exploratory and contextualized AI-driven data science needs.

AMPs are Cloudera AI projects that can be deployed with one click directly from Cloudera AI. AMPs enable data scientists to go from an idea to a fully working use case in a fraction of the time. They provide an end-to-end framework for building, deploying, and monitoring business-ready AI applications instantly.

Cloudera AI’s MLOps capability enables one-click model deployment, model cataloging, and granular prediction monitoring to keep models secure and accurate across production environments.

Deliver insights with a consistent and easy-to-use experience, featuring intuitive and accessible drag-and-drop dashboards and custom application creation augmented by AI assistants.

Cloudera AI deployment options

Deploy Cloudera AI anywhere with a cloud-native, portable, and consistent experience.

Cloudera on cloud

- Multi-cloud ready: Avoid lock-in and leverage AI Inference, agents, and AMPs with data from anywhere.

- Scalable: Scale compute resources dynamically, only paying for what you use.

- Full lifecycle integration: Seamlessly and securely share workloads and outputs across Cloudera experiences including Cloudera Data Engineering and Cloudera Data Warehouse.

Cloudera on premises

- Cost-effective: Optimize resource use for complex contextual modeling and AI workflows.

- Optimized performance: Meet SLAs with workload isolation and multi-tenancy.

- Collaborate effectively: Securely share workloads, data, models, and results across teams at every stage of the data lifecycle.

What customers say about Cloudera AI

“Integrates seamlessly with the other Cloudera experiences, enabling rapid realization of insights from your data. I especially appreciate the flexibility and openness.”

Analytics Solution Architect

Energy and Utilities Industry

“One-stop-shop for your data science needs. Managing multiple sessions, automating data pipeline jobs, and even creating machine learning apps are all easy and intuitive.”

Model Development Expert

Services Industry

“Excellent platform for any type of ML and Data engineering project. Provides an easy path to develop and test code as well as ML performance tracking.”

Big Data And Analytic Architect

Miscellaneous Industry