Your foundation for building real-time applications.

Cloudera Streaming is designed for mission-critical applications. Using Apache Kafka and Apache Flink for real-time data processing, it handles millions of events per second with low latency and guaranteed consistency.

Accelerate application development by enabling teams to easily build reliable pipelines for real-time ML models and advanced predictive analytics.

Develop, test, and deploy streaming applications consistently, securely, and with governance across on-premises or cloud environments.

Build faster, more secure data management and analytics applications with optimal performance and scalability, anywhere.

Enable the applications that power your business.

- Real-time fraud detection

  Build microservices that analyze transaction streams in real time.

- Dynamic customer experiences

  Build event-driven applications that act on interaction data the instant it’s created.

- Predictive maintenance and optimized industrial processes

  Build applications that ingest and analyze high-volume data from connected devices.

- Enterprise-grade security

  Secure and govern the data lifecycle via integrations with the larger Cloudera platform.

Create in-the-moment offers, dynamic recommendations, and proactive service applications with Cloudera SQL Stream Builder.

Empower analysts to use SQL on live Flink streams, enabling real-time personalization and instant next-best-action decisions for customers.

Cloudera SDX protects all data in motion, ensuring data quality and consistency.

Provide operations teams with comprehensive monitoring tools that let them control data access and governance.

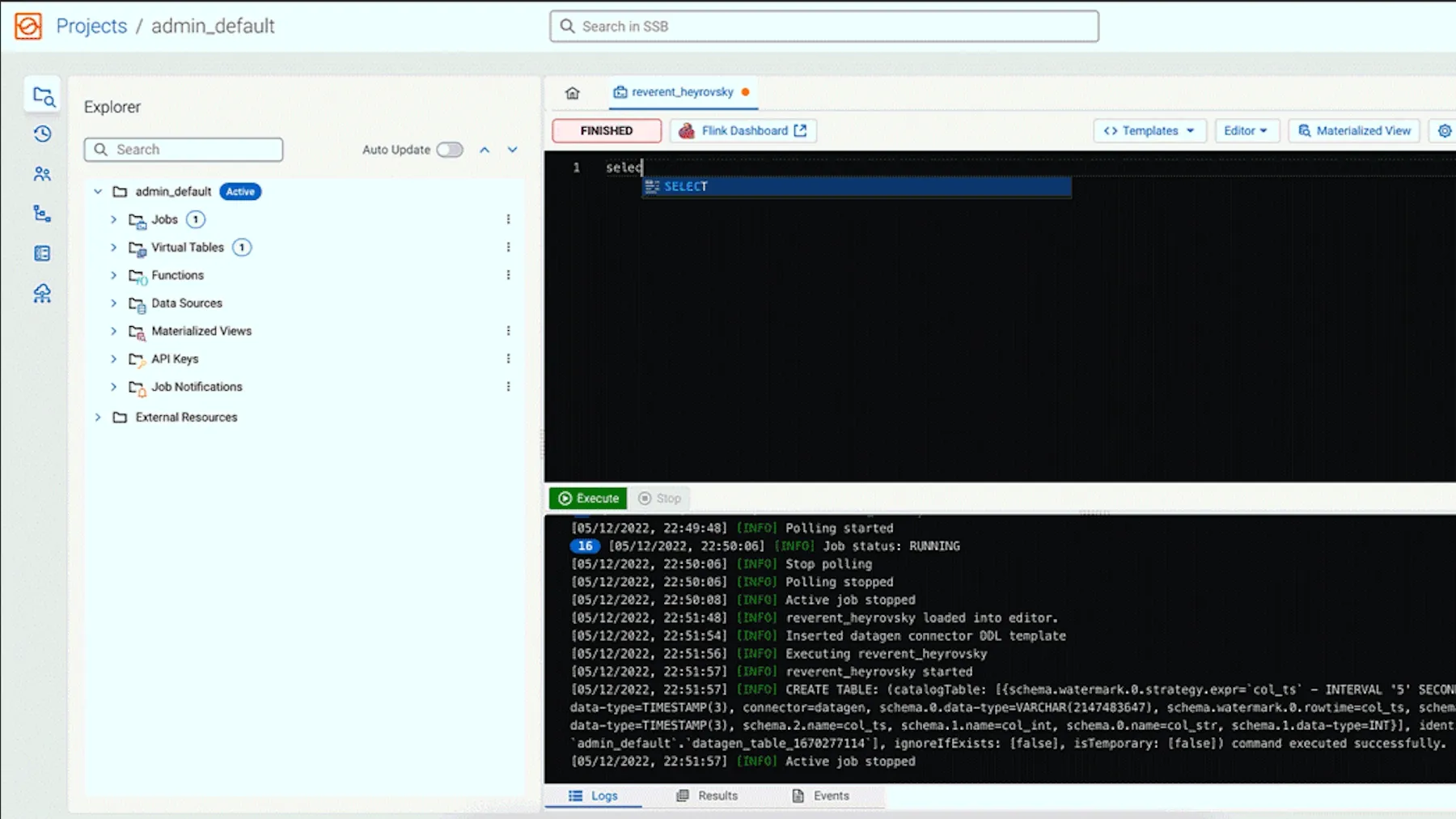

An intuitive interface for building, testing, and managing Flink SQL jobs. It accelerates development cycles and empowers a wider range of technical users to create robust streaming applications.

Built on Apache Flink, the industry-leading stream processing engine, Cloudera Streaming Analytics provides the power and resilience your team's mission-critical applications demand.

Cloudera Streams Messaging, powered by Apache Kafka, is a robust, scalable, and secure messaging backbone for your real-time applications. It provides a reliable data transport layer for the most demanding development workloads.

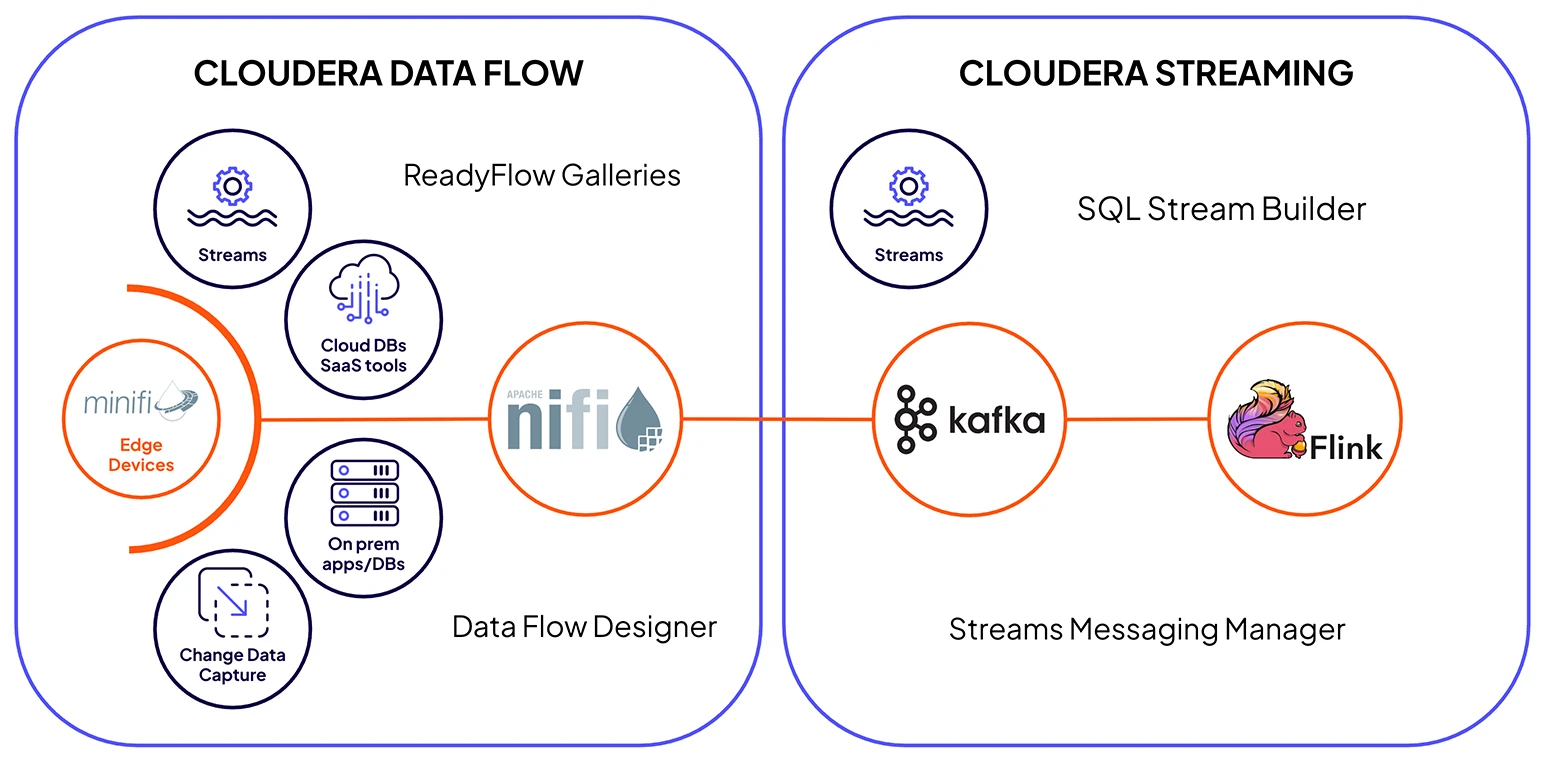

Cloudera Streaming, coupled with Cloudera Data Flow, delivers integrated, enterprise-grade components, using NiFi for secure ingestion, Kafka for resilient transit, and Flink for real-time processing across the full data lifecycle.

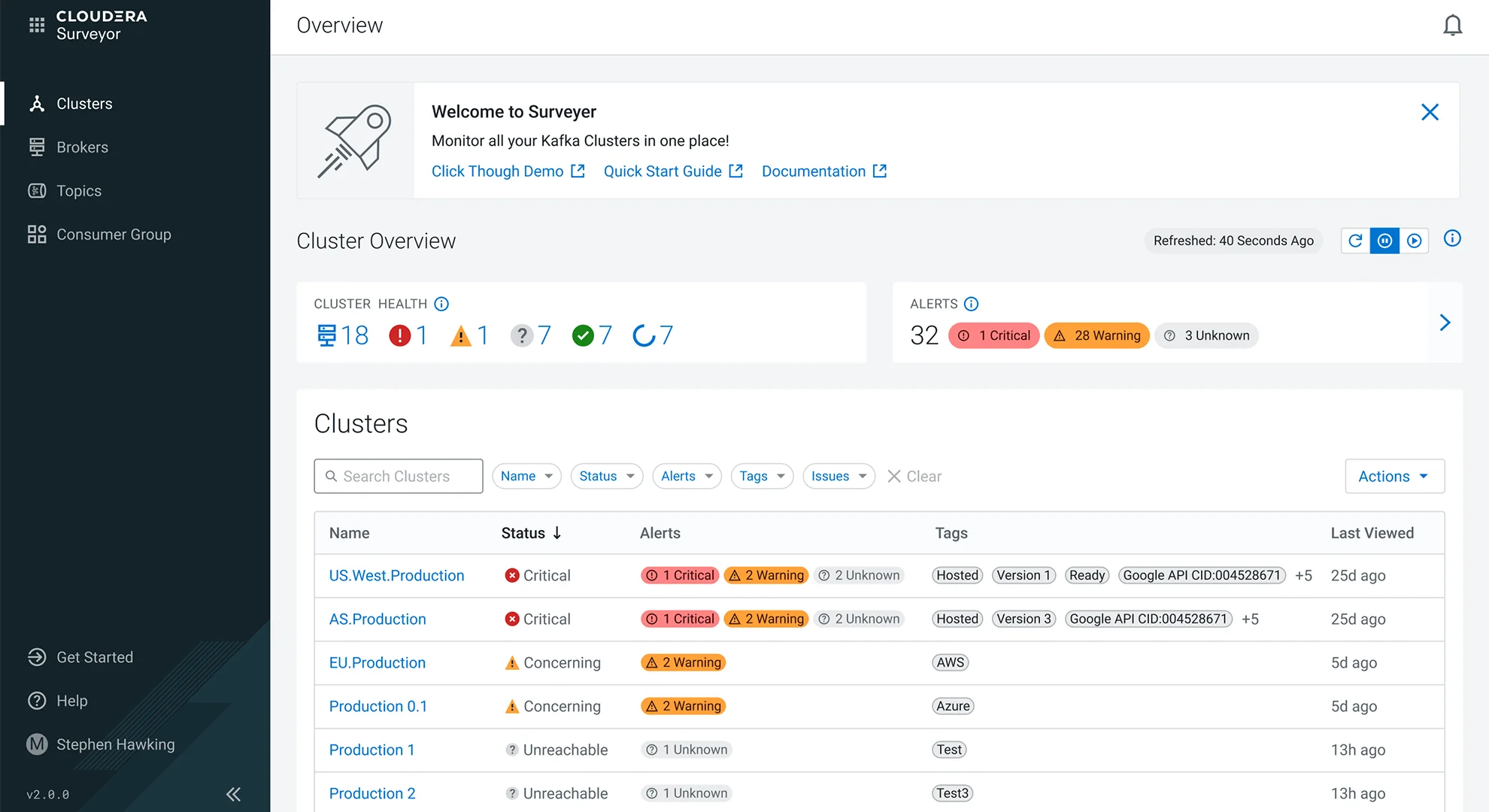

Cloudera Surveyor offers a single pane of glass for Kafka observability on Kubernetes, simplifying operations and ensuring the health of the application data backbone. The Schema Registry provides a centralized, versioned repository for managing data schemas.

Cloudera Streaming drives real value across industries.

public sector

IRS

financial services

Banco Santander Spain

manufacturing and automotive

Dell

A flexible foundation to build and deploy anywhere.

For public cloud apps

Enable your teams to build and scale their real-time applications on their public cloud platform of choice.

For on-premises apps

Deploy as part of your private cloud to give developers maximum control over latency and resources.

As an operator for Kubernetes

Empower DevOps to offer Flink and Kafka independently in a conformant Kubernetes cluster to maximize agility and self-service.

Take the next step

See how real-time insights can transform your organization with Cloudera Streaming.

Explore more products

Ingest, process, and analyze real-time structured and unstructured data anywhere it lives for immediate insight, action, and AI.

Fuel real-time analytics by collecting, curating, and analyzing streaming data in motion from any source.

Make smart decisions with a flexible platform that processes any data, anywhere, for actionable analytics and trusted AI.

Manage, control, and monitor data from edge devices with real-time collection and processing at the edge.

Accelerate data-driven decision making from research to production with a secure, scalable, and open platform for enterprise AI.