We’ve entered a new phase of AI adoption: 88% of enterprise AI projects stall before reaching production, not because of poor ideas or weak models, but because infrastructure can’t keep up. Cloud APIs get expensive fast. Governance is an afterthought. Latency adds up. And for regulated industries, moving sensitive data to a public endpoint is just not an option.

Closing the gap between an AI pilot and full-scale production requires bringing intelligence directly to the source. Cloudera AI Inference service gives enterprise teams a secure, performant, and cost-effective production model serving layer—running directly where the data lives.

Instead of sending your data to the cloud as context for models, Cloudera brings the models to you—unblocking intelligence exactly where it’s needed, securing it by design, and scaling it confidently behind your own firewall.

3 Reasons Why Bringing AI to Your Data is Important: Privacy, Cost, and Choice at Scale

Keep Data Private and Protected

Most AI services require you to send data to the cloud, creating risks around compliance, cost, and latency. Cloudera takes the opposite approach and brings models to where your data already lives. Whether that is a secure virtual private cloud (VPC) or an air-gapped (fully offline and isolated) on-premises environment, this model-to-data strategy keeps your information private and governed while still enabling high-performance inference to power AI in production.

Predictable Economics in the Long Run

Running AI in the cloud 24/7 on per-request pricing can lead to spiraling, unpredictable expenses. Per-request fees create a budget that fluctuates with usage, making long-term forecasting difficult. By shifting inference to infrastructure the organization already owns and controls, teams can bypass these external usage fees. Once AI moves into steady-state production, costs become more predictable, allowing for a higher return on investment as workloads scale.
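
To make the trade-off concrete, here is a minimal sketch of the break-even arithmetic behind that argument. It assumes a deliberately simplified model: cloud inference is billed at a flat price per 1,000 requests, and owned infrastructure has a fixed monthly cost (amortized hardware, power, and operations) that does not change with volume. The function names and every figure in it are hypothetical and chosen only for illustration; they are not Cloudera or cloud-provider pricing.

```python
import math


def monthly_api_cost(requests_per_month: int, price_per_1k_requests: float) -> float:
    """Usage-based cost: grows linearly with request volume (assumed flat rate)."""
    return requests_per_month / 1000 * price_per_1k_requests


def break_even_requests(fixed_monthly_cost: float, price_per_1k_requests: float) -> int:
    """Monthly request volume at which per-request fees equal the fixed cost.

    Above this volume, the assumed fixed-cost infrastructure is cheaper.
    """
    if price_per_1k_requests <= 0:
        raise ValueError("price_per_1k_requests must be positive")
    return math.ceil(fixed_monthly_cost / price_per_1k_requests * 1000)


if __name__ == "__main__":
    # Hypothetical inputs, for illustration only.
    fixed_cost = 20_000.0   # assumed monthly cost of owned infrastructure
    price_per_1k = 2.0      # assumed cloud price per 1,000 requests

    threshold = break_even_requests(fixed_cost, price_per_1k)
    print(f"Break-even volume: {threshold:,} requests per month")

    for volume in (5_000_000, 20_000_000):
        api = monthly_api_cost(volume, price_per_1k)
        cheaper = "owned infrastructure" if api > fixed_cost else "per-request API"
        print(f"{volume:,} requests: API cost {api:,.2f} vs fixed {fixed_cost:,.2f} -> {cheaper}")
```

In this simplified model, the usage-based bill keeps growing with every additional request while the fixed cost stays flat, which is why forecasting becomes easier once a workload reaches steady-state volume. Real deployments would also need to account for capacity limits, utilization, and hardware refresh cycles.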

Control and Choice

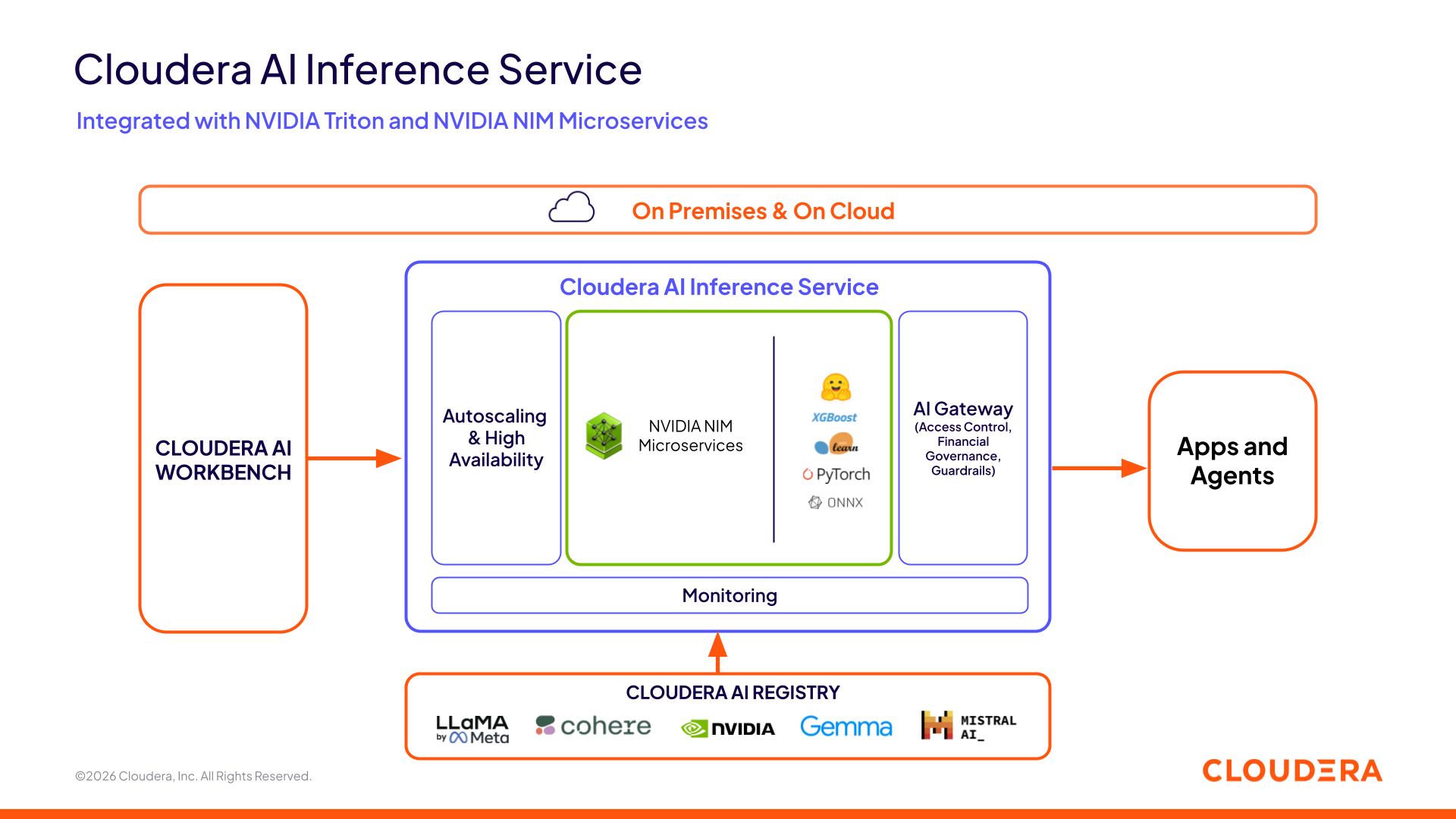

Most cloud AI providers steer customers into their proprietary ecosystems, making it hard to switch providers or to extend and fully control your models. With Cloudera AI Inference service, you can deploy a wide range of AI capabilities, from open-source GenAI LLMs like NVIDIA’s Nemotron to traditional predictive models, without giving up control or ownership of your intellectual property. Cloudera AI Inference service is accelerated by the NVIDIA AI stack (NVIDIA Blackwell GPUs, NVIDIA Dynamo-Triton, and NVIDIA NIM microservices for high-performance, scalable model serving), so you can innovate freely while keeping your AI infrastructure flexible, portable, and future-proof.

Figure 1: Cloudera AI Inference Service Architecture

Success Stories: Early Adoption of Cloudera AI Inference Service On Premises

Cloudera AI Inference service is unlocking new AI use cases in places where the cloud can’t go: offline environments, sovereign infrastructure, and latency-critical operations. Here are three real-world scenarios now enabled by Cloudera AI Inference service and already underway with early adopters.

National Security: Air-Gapped Intelligence That Never Sleeps or Leaks

In national defense, speed and security are non-negotiable. But until recently, intelligence officers spent thousands of hours manually sifting through sensitive, offline documents—slowed by process, overwhelmed by volume, and unable to leverage public AI tools without risking exposure.

Now, with Cloudera AI Inference service running inside air-gapped environments, defense agencies can deploy powerful LLM assistants that scan and summarize massive document collections in seconds. These models operate entirely offline, with no internet connection, no cloud dependencies, and no data leakage, helping analysts make faster decisions without compromising security.

Global Finance: Instant Operations, Zero Data Exposure

Cross-border finance lives in dozens of languages. Previously, translating documents like contracts, fraud reports, or compliance updates meant using external tools, raising serious concerns over data exposure and auditability.

Today, one of the top global credit card providers is exploring Cloudera AI Inference service and testing an on-premises deployment of multilingual models to translate sensitive communications across more than 200 markets in real time and fully under internal control. By running inference on its own infrastructure, the provider aims to speed up internal operations and customer response times while avoiding the compliance risks of third-party APIs.

Public Sector: AI Agents for Every Employee

Government agencies are under pressure to serve more people, faster—yet employees often rely on outdated portals and dense policy manuals. Public GenAI tools aren’t an option due to privacy mandates and unpredictable costs.

Early implementations of Cloudera AI Inference service are supporting on-premises AI chatbots trained on internal agency documentation. These agents help staff and constituents navigate complex topics with speed and confidence, delivering answers instantly, while maintaining full control over the data, prompts, and outputs.

Looking Ahead: The Future of AI is Anywhere Data Lives

By bringing the model to where your data lives, Cloudera AI Inference service is helping organizations scale intelligence on their own terms, with predictable costs and the flexibility to choose from a wide range of production models. Whether you’re navigating air-gapped security mandates or optimizing high-volume global operations, the path to production-grade AI is now open.

Cloudera AI is the trusted foundation for building, deploying, and governing all types of AI—from generative and agentic AI to traditional machine learning—across your data estate.

Ready to scale? Don’t let infrastructure limit your AI strategy. Visit the Cloudera AI Inference service webpage for use case demos, learn more in this webinar, or book a demo to see how to turn “AI anywhere” into a reality.