In the current enterprise technology landscape, we’re witnessing an industry-wide scramble. As organizations shift from monolithic architectures to complex environments built on heterogeneous infrastructure, cloud-based data platforms are hitting a visibility (that is, observability) wall. Their response has been a wave of reactive, multi-billion-dollar acquisitions designed to "bolt on" the observability that they lack natively.

But observability shouldn't be a postscript or a line item from a recent merger; it must be a core capability. At Cloudera, we’re evolving our native observability DNA into a unified, hybrid-first powerhouse, proving that true insight across the entire data estate is a foundational requirement for a unified data fabric, open data lakehouse, data in motion, AI, and your data platform as a whole. This is true whether you run your apps, workloads, models, and agents in public clouds, on-premises in data centers, or at the edge.

The Multi-Faceted Nature of Observability: Beyond Simple Monitoring

True observability is not a single tool; it’s a foundational capability baked into the data platform to answer critical questions for every stakeholder across the data estate. Whether it’s a business analyst wondering why a dashboard hasn't refreshed, a database admin investigating a long-running query, or a system admin identifying skewed data storage across cluster nodes, observability must offer telemetry that’s integrated to provide immediate, actionable answers.

In the reality of hybrid and multi-cloud landscapes, relying on separate, single-purpose tools (for data quality, cloud performance, infrastructure health, and so on) that don’t operate across the entire data landscape doesn’t grant true visibility. Instead, it creates a silo problem: disconnected islands of observed systems.

It’s the interplay between these systems (in data, workloads, resource utilization, etc.) that necessitates observability. When these categories are disconnected, organizations lose the deep context required for operational excellence. Achieving that level of insight requires visibility that links logs, metrics, and traces cohesively across the data layer, the underlying infrastructure, and everything in between.
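As a rough illustration of what linking these signals can mean in practice, the sketch below joins trace, log, and metric records so that a slow query can be read alongside the log lines and node-level resource data that belong to it. The record shapes, the field names (`trace_id`, `node`, `cpu_pct`, `duration_ms`), the `correlate` helper, and the sample values are all hypothetical and not drawn from any Cloudera or OpenTelemetry schema. It is a minimal sketch of the idea, not a description of how any product implements correlation.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Correlated:
    """All telemetry linked to one request, keyed by trace ID."""
    spans: list = field(default_factory=list)
    logs: list = field(default_factory=list)
    metrics: list = field(default_factory=list)


def correlate(spans, logs, metrics):
    """Group spans and logs by trace_id, then attach metrics
    from the nodes those spans ran on."""
    by_trace = defaultdict(Correlated)
    for span in spans:
        by_trace[span["trace_id"]].spans.append(span)
    for log in logs:
        # Logs with no matching trace cannot be linked and are skipped.
        if log.get("trace_id") in by_trace:
            by_trace[log["trace_id"]].logs.append(log)
    for view in by_trace.values():
        nodes = {s["node"] for s in view.spans}
        view.metrics = [m for m in metrics if m["node"] in nodes]
    return dict(by_trace)


# Hypothetical sample telemetry from three otherwise separate sources.
spans = [
    {"trace_id": "t1", "name": "run_query", "node": "worker-3", "duration_ms": 9200},
    {"trace_id": "t2", "name": "run_query", "node": "worker-1", "duration_ms": 140},
]
logs = [
    {"trace_id": "t1", "message": "spilling to disk"},
    {"trace_id": None, "message": "heartbeat"},
]
metrics = [
    {"node": "worker-3", "cpu_pct": 97},
    {"node": "worker-1", "cpu_pct": 22},
]

views = correlate(spans, logs, metrics)
slow = views["t1"]
print(slow.spans[0]["duration_ms"], slow.logs[0]["message"], slow.metrics[0]["cpu_pct"])
```

With the three signals joined, the long-running query, the spill warning, and the saturated node appear in one view rather than in three separate tools, which is the kind of context the disconnected approach loses.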

The Inevitable Complexity of the Hybrid AI Era

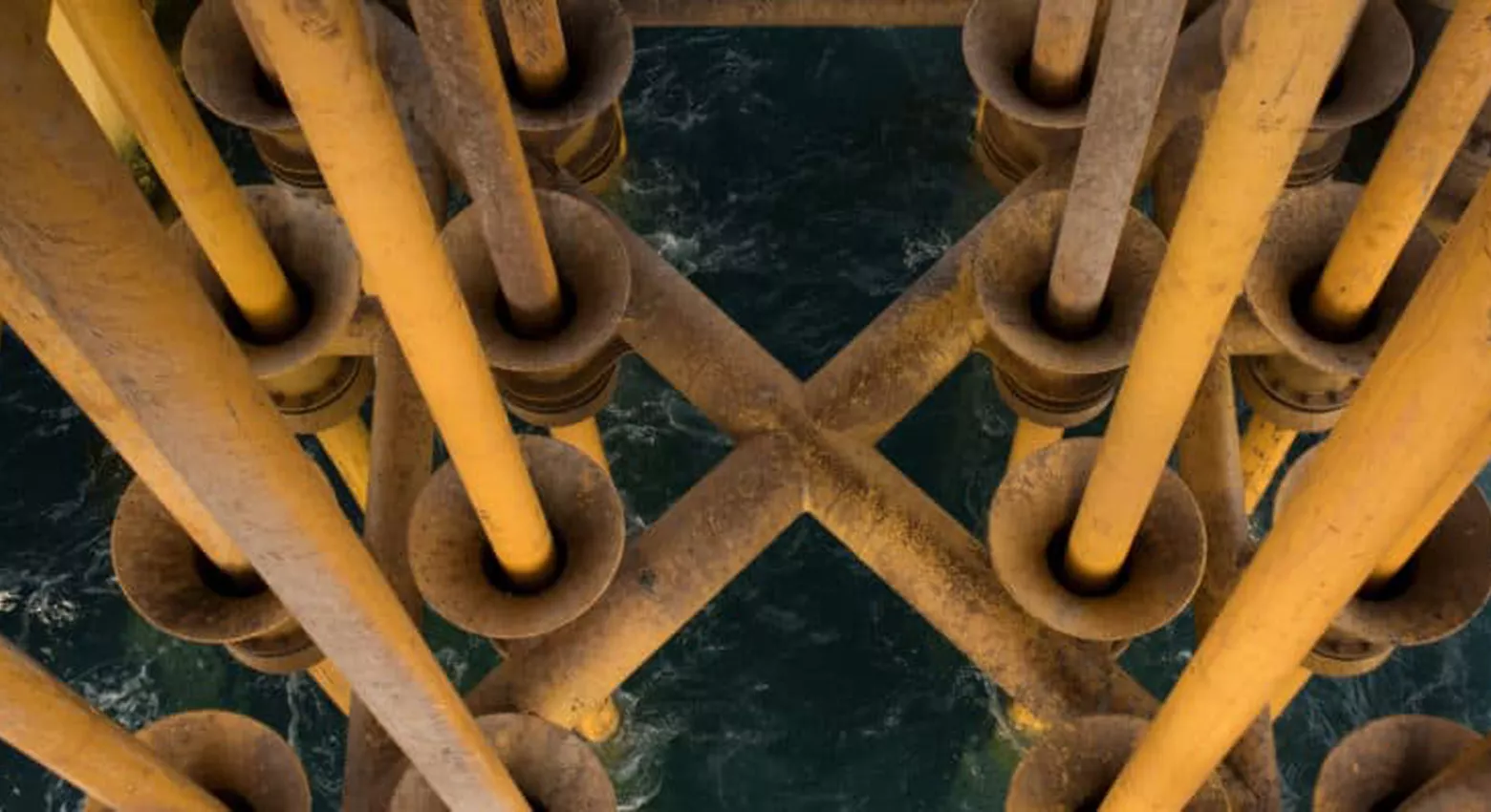

The rise of generative AI and large-scale modeling has fundamentally transformed hybrid architecture from a strategic choice into a technical necessity. AI workloads demand a delicate balance between massive cloud-scale compute for training and localized, on-premises data gravity for privacy and low-latency inference, leading the modern enterprise to become an intricate web of heterogeneous environments.

This shift toward a truly distributed footprint—spanning from the core data center to the public cloud and out to the edge—inherently magnifies complexity, as workloads behave differently both within and between these various infrastructures. This complexity makes it exponentially harder to get to the critical "why" behind performance lags, cost spikes, or consumption issues. In this hybrid AI era, system complexity without a unified view and telemetry becomes an unmanageable black box, leaving IT leaders unable to predict or prevent critical failures.

The "Bolt-On" Trap: Why Observability Cannot Be an Afterthought

There’s been a recent surge in cloud-based data providers acquiring observability startups: Snowflake acquiring Observe, Palo Alto Networks acquiring Chronosphere, and more. These multi-billion-dollar acquisitions show that when data platforms lack native observability, they eventually hit a "visibility wall." These providers are now attempting to bolt on what should have been a core capability.

For the modern enterprise, a fragmented, cloud-only approach will not provide the visibility it needs to achieve true operational excellence:

Cloud-only tools are restricted to a specific segment of the stack, ignoring the vast data estate existing outside the public cloud.

Tools with bolted-on observability struggle to provide the unified context needed to understand the cause of issues across complex hybrid environments. Customers frequently find themselves juggling disjointed interfaces for logs, metrics, and traces, which highlights a significant lack of cohesion between the data layer and the infrastructure supporting it.

Cloudera's Native and Unified Observability Capability

Cloudera Observability is a native, foundational capability that moves beyond simple monitoring to act as a unifying powerhouse. By positioning visibility as a foundational requirement, Cloudera provides total insight across the entire hybrid cloud: on-premises, in the public cloud, and at the edge. And by using OpenTelemetry as the framework for collecting distributed traces and metrics, we’re aligned with the leading open observability standard.

Cloudera Observability delivers more than just the "why" behind performance; it provides a comprehensive cycle of insight. We’ve "bottled" the diagnostic intelligence gathered from more than 1.3 million nodes under subscription to create sophisticated diagnostic tools. Now, with the integration of Cloudera Cloud Factory (formerly known as Taikun CloudWorks), we’re best placed to extend these capabilities beyond cloud-native infrastructure management.

This evolution places predictive reliability firmly within reach for the modern enterprise, transforming maintenance from a cycle of reactive patching into a proactive strategy. By leveraging advanced warnings on known issues and security vulnerabilities, organizations can finally transcend traditional troubleshooting to achieve a state of continuous, reliable performance across their entire data estate.

Ultimately, navigating the complexity of the hybrid AI era requires a data platform built with observability in its DNA. To learn more about how you can achieve true observability with Cloudera, reach out to our professional services team, check out our product demos, or sign up for a free 5-day trial.