Autonomous agents act toward complex goals without requiring human direction at each step. In enterprise environments, deploying these agents introduces a more exacting set of challenges: they must navigate heterogeneous data systems; satisfy compliance, audit, and data sovereignty mandates; and keep all data within the organization's operational boundary.

Long-horizon agents represent a new class of autonomous AI, extending beyond single tasks to pursue objectives across dozens of sequential decisions, running workflows for hours or days while maintaining context throughout. At enterprise scale, every one of those challenges is amplified.

An Architecture Built for Enterprise AI Agents

Cloudera designed Cloudera Agent Studio (part of Cloudera AI Studios) in collaboration with NVIDIA to address exactly these challenges.

NVIDIA Nemotron provides the model foundation: it’s purpose-built for agentic AI and the high-throughput inference demands of long-horizon workflows.

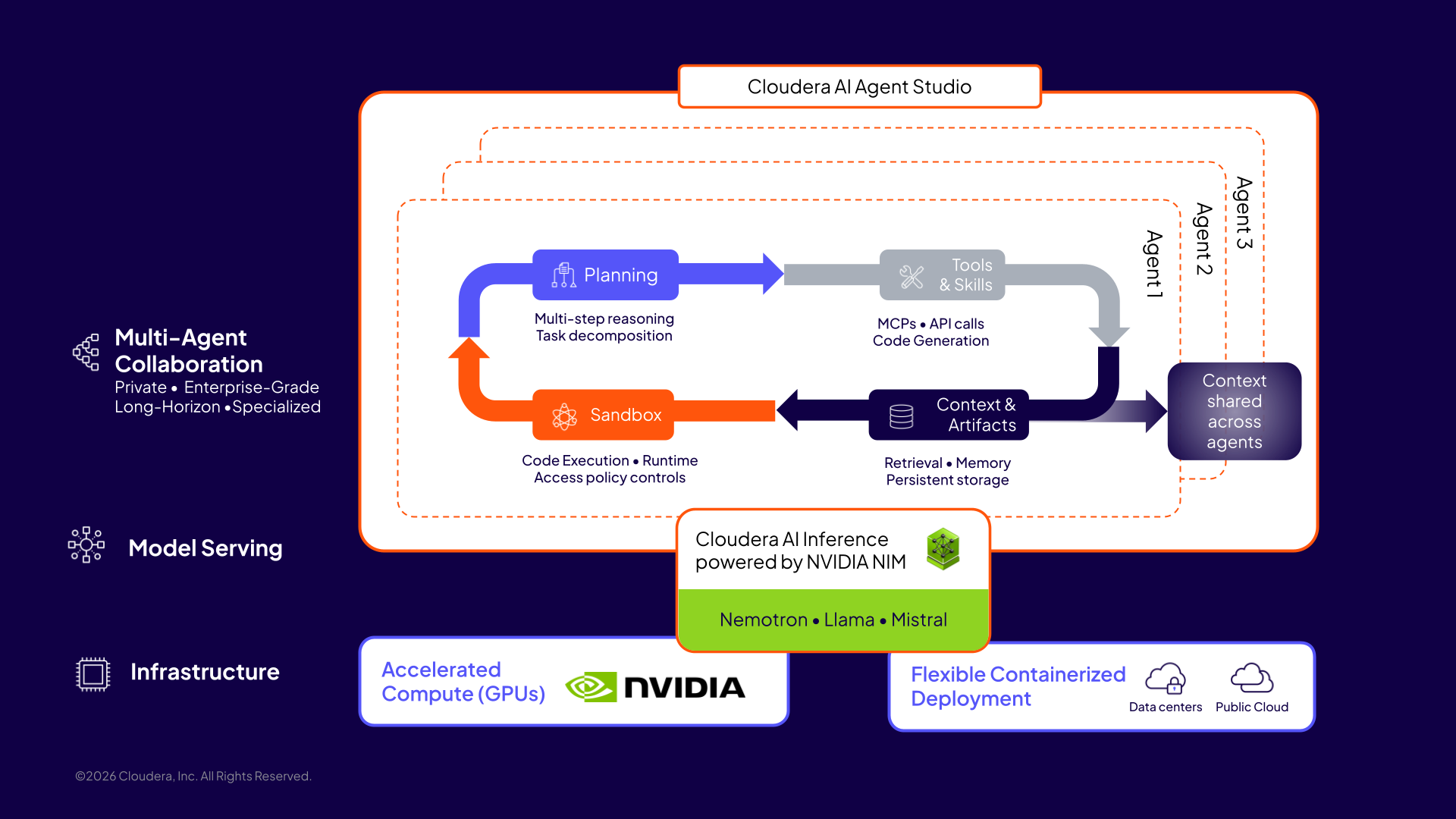

Cloudera Agent Studio provides the orchestration layer that builds on that foundation through four architectural pillars: dynamic multi-step planning, transparent multi-agent collaboration, context engineering for accuracy, and sandboxed execution. Each pillar addresses a specific requirement that emerges when autonomous agents operate at enterprise scale.

Figure 1: Cloudera Agent Studio orchestrates autonomous workflows through iterative multi-step planning, multi-agent collaboration with tools and skills, artifact-driven context engineering, and sandboxed execution—built on the foundation of model serving with Cloudera AI Inference, powered by NVIDIA NIM, and Nemotron models for agentic AI.

The Foundation: Private Model Deployment with NVIDIA Nemotron

Enterprise AI starts with data governance. Prompts, proprietary data, and model outputs must stay within the organization's operational boundary, meeting compliance mandates without architectural compromise. This is the core requirement of Private AI: the full inference stack running inside the enterprise, not outside it.

Cloudera AI Inference service, powered by NVIDIA NIM microservices, enables high-performance, scalable model serving directly within the enterprise environment, keeping prompts, data, and outputs inside the security perimeter. Accelerated by the NVIDIA AI stack, including Blackwell GPUs and Dynamo-Triton, the service supports a wide range of models, including NVIDIA’s Nemotron model family for agentic AI with advanced reasoning, tool use, and long-horizon workflows. This foundation allows organizations to build and run enterprise AI agents directly on their data—securely and at scale.

Four Pillars of Cloudera Agent Studio

1. Dynamic, Iterative, Multi-Step Planning

Enterprise data environments are not clean. Real deployments involve dozens of databases with inconsistent schemas, sparse documentation, and no deterministic path from a business question to the right data source. The agent must construct that path at runtime.

Agent Studio's orchestrator treats exploration as part of execution. It decomposes complex requests into multi-step plans, executes them iteratively, and self-evaluates after each step before committing to a path. This self-correcting planning loop makes agents reliable in environments they have never encountered and sustains long-horizon workflows across many sequential steps.
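
The plan, execute, and self-evaluate loop described above can be sketched in a few lines. This is a minimal illustration, not Agent Studio's implementation: `run_plan`, `StepResult`, and the `toy_*` callables are hypothetical names, and a real orchestrator would back them with model calls and tools.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class StepResult:
    step: str
    output: str
    ok: bool


def run_plan(
    goal: str,
    plan: Callable[[str], List[str]],
    execute: Callable[[str], str],
    evaluate: Callable[[str, str], bool],
    replan: Callable[[str, List[StepResult]], List[str]],
    max_steps: int = 10,
) -> List[StepResult]:
    """Run a plan one step at a time, self-evaluating after every step."""
    history: List[StepResult] = []
    queue = list(plan(goal))
    while queue and len(history) < max_steps:
        step = queue.pop(0)
        output = execute(step)
        ok = evaluate(step, output)
        history.append(StepResult(step, output, ok))
        if not ok:
            # The step failed its check: drop the remaining steps and
            # request a revised plan informed by everything tried so far.
            queue = list(replan(goal, history))
    return history


# Toy stand-ins (hypothetical): the first guess at a table name is wrong.
def toy_plan(goal: str) -> List[str]:
    return ["query sales_v1", "summarize"]


def toy_execute(step: str) -> str:
    if step == "query sales_v1":
        return "error: table not found"
    return f"done: {step}"


def toy_evaluate(step: str, output: str) -> bool:
    return not output.startswith("error")


def toy_replan(goal: str, history: List[StepResult]) -> List[str]:
    return ["query sales_v2", "summarize"]


history = run_plan("quarterly revenue", toy_plan, toy_execute, toy_evaluate, toy_replan)
for result in history:
    print(result.step, "->", "ok" if result.ok else "retry")
```

The `max_steps` cap is a design choice in this sketch: it bounds a loop that could otherwise replan indefinitely in an environment where no step ever passes evaluation.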

2. Multi-Agent Collaboration: Reusability and Transparency

Complex enterprise workflows span multiple domains, each requiring distinct reasoning strategies and specialized tools. A single agent attempting to cover all of them cannot be well-optimized for any, and the broader its scope, the harder it becomes to understand and govern agent behavior.

Agent Studio is built around specialized agents, each scoped to a specific domain and equipped with the appropriate tools, coordinated by an orchestrator that understands how to delegate. What makes this collaboration transparent and reusable is how agents communicate: each agent writes structured outputs to shared project context, and subsequent agents consume those outputs as explicit, inspectable inputs. The full chain of reasoning is traceable at every step, providing the auditability enterprises require and the reusability to build on prior work across runs.
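
One way to picture this communication pattern is a shared store in which every output records its producer and the inputs it consumed. The sketch below is an assumption-laden illustration: `Artifact`, `ProjectContext`, and the agent and artifact names are hypothetical and do not reflect Agent Studio's actual data model.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Tuple


@dataclass(frozen=True)
class Artifact:
    name: str
    producer: str                  # agent that wrote the artifact
    payload: Any                   # structured output (SQL, table, chart spec, ...)
    inputs: Tuple[str, ...] = ()   # names of the artifacts it consumed


class ProjectContext:
    """Hypothetical shared store: agents write outputs, later agents read them."""

    def __init__(self) -> None:
        self._artifacts: Dict[str, Artifact] = {}

    def write(self, artifact: Artifact) -> None:
        if artifact.name in self._artifacts:
            raise ValueError(f"artifact already exists: {artifact.name}")
        for parent in artifact.inputs:
            if parent not in self._artifacts:
                raise KeyError(f"unknown input artifact: {parent}")
        self._artifacts[artifact.name] = artifact

    def read(self, name: str) -> Artifact:
        return self._artifacts[name]

    def lineage(self, name: str) -> List[str]:
        """Return every upstream artifact name, earliest first, ending with `name`."""
        chain: List[str] = []
        for parent in self.read(name).inputs:
            for item in self.lineage(parent):
                if item not in chain:
                    chain.append(item)
        chain.append(name)
        return chain


ctx = ProjectContext()
ctx.write(Artifact("schema_map", "schema_agent", {"orders": ["id", "amount"]}))
ctx.write(Artifact("query_result", "sql_agent", [("Q1", 10)], inputs=("schema_map",)))
ctx.write(Artifact("forecast", "stats_agent", {"Q2": 12}, inputs=("query_result",)))
print(ctx.lineage("forecast"))
```

Because each artifact names its inputs explicitly, the chain behind any result can be reconstructed after the fact, which is the property the paragraph above calls traceability.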

3. Context Engineering: Accuracy, Speed, and Cost

At enterprise data scales, passing raw data directly to the model does not work. Context windows are finite, and as unstructured context grows, accuracy degrades well before the window limit is reached.

Agent Studio treats the context window as a precision instrument: at each step, only the information relevant to that agent's specific task reaches the model. This artifact-driven design reduces token consumption, cutting inference cost and latency while improving accuracy. That combination is what makes long-horizon workflows tractable at enterprise scale.
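
As a rough sketch of the idea, a context builder might pass along only the summaries of the artifacts a task needs and stop at a token budget. Everything here is an assumption made for illustration: the `build_context` helper, the artifact layout, and the whitespace-based token estimate, which stands in for a real tokenizer.

```python
from typing import Dict, List, Tuple


def estimate_tokens(text: str) -> int:
    # Crude stand-in for a tokenizer: counts whitespace-separated words.
    return len(text.split())


def build_context(
    task_needs: List[str],
    artifacts: Dict[str, Dict[str, str]],
    budget: int,
) -> Tuple[str, int]:
    """Assemble a prompt context from artifact summaries, within a token budget."""
    selected: List[str] = []
    used = 0
    for name in task_needs:
        summary = artifacts[name]["summary"]
        cost = estimate_tokens(summary)
        if used + cost > budget:
            break  # stop before exceeding the budget
        selected.append(f"[{name}] {summary}")
        used += cost
    return "\n".join(selected), used


# Hypothetical artifacts: each keeps bulky raw data alongside a short summary.
artifacts = {
    "schema_map": {
        "summary": "orders table joins customers on customer_id",
        "raw": "column " * 50_000,
    },
    "query_result": {
        "summary": "revenue by quarter: Q1 10, Q2 12",
        "raw": "row " * 50_000,
    },
    "unrelated_log": {
        "summary": "nightly ingest completed",
        "raw": "line " * 50_000,
    },
}

context, used = build_context(["schema_map", "query_result"], artifacts, budget=50)
print(context)
print("estimated tokens:", used)
```

The raw payloads never reach the prompt in this sketch; only the two summaries the task asked for do, so the model sees a small fraction of the data the artifacts actually hold.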

4. Sandboxed Execution

What makes autonomous agents genuinely powerful is their ability to dynamically generate tools, skills, and executable code as workflows demand them, capabilities that Agent Studio supports natively. But without isolation, agent-generated code and tools executing directly against enterprise systems present unacceptable risk.

We architected Agent Studio's execution layer around isolation by default. All agent-generated code and tool execution runs in a sandboxed runtime with no access to systems outside their defined scope. Agents begin with zero permissions, and every action is policy-enforced at the infrastructure layer, not inside the agent process itself. This gives regulated industries the auditability they require, without restricting what agents can do.
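
The deny-by-default stance can be illustrated with a small policy check that lives outside the agent and records every decision. This is only a conceptual sketch under stated assumptions: `PolicyEnforcer` and the agent, action, and resource names are hypothetical, and an in-process Python object provides no real isolation, which in practice comes from the infrastructure layer.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple

Grant = Tuple[str, str, str]  # (agent, action, resource)


@dataclass
class PolicyEnforcer:
    """Hypothetical deny-by-default policy check with an audit trail."""

    grants: Set[Grant] = field(default_factory=set)
    audit_log: List[Dict[str, object]] = field(default_factory=list)

    def grant(self, agent: str, action: str, resource: str) -> None:
        self.grants.add((agent, action, resource))

    def authorize(self, agent: str, action: str, resource: str) -> bool:
        # Anything not explicitly granted is denied.
        allowed = (agent, action, resource) in self.grants
        # Every decision is logged, whether it was allowed or not.
        self.audit_log.append(
            {"agent": agent, "action": action, "resource": resource, "allowed": allowed}
        )
        return allowed


enforcer = PolicyEnforcer()

# A new agent starts with zero permissions.
before = enforcer.authorize("sql_agent", "read", "sales_db")

# Grant exactly one scoped permission, then retry.
enforcer.grant("sql_agent", "read", "sales_db")
after = enforcer.authorize("sql_agent", "read", "sales_db")

# A different action on the same resource is still denied.
write_attempt = enforcer.authorize("sql_agent", "write", "sales_db")

for entry in enforcer.audit_log:
    print(entry)
```

Keeping the check and the log in a component the agent cannot modify is the point of the design: a misbehaving agent can request anything, but it cannot widen its own grants or erase the record of what it tried.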

Customer Story: Agentic AI Transforming Petabyte-Scale Data Analytics

Cloudera manages over 30 exabytes of structured data across its customer base, which makes structured data analytics the area where this architecture delivers immediate impact. A major media and entertainment company deployed it to give business users and analysts a natural language interface to their operational data. Their data estate spanned petabytes across dozens of databases, often with conflicting metadata and sparse documentation.

Cloudera Agent Studio orchestrated specialized agents backed by NVIDIA Nemotron running inside the customer's private network. A business user's analytical question triggered an iterative planning loop: the orchestrator explored the data estate, navigated schema ambiguity, and identified the right data sources autonomously. When the analysis required statistical computation beyond what SQL could express, the orchestrator delegated to the appropriate code execution agent. Intermediate outputs were written as artifacts and passed forward through the long-horizon workflow. All generated code executed in a sandboxed environment, maintaining a complete audit trail throughout.

Workflows that once required a data engineer, a developer, and an analyst working in sequence became accessible to any business user. The agents' outputs, including SQL commands, generated code, and visualizations, were written to shared project context throughout, each inspectable and auditable. Those artifacts were also exportable as production pipelines. Because the code that agents generate is deterministic even when the underlying models are not, those pipelines are reliable and reproducible without additional engineering.

Architecture as Competitive Advantage

Every pillar in this architecture builds on the one before it. A private inference layer provides the foundation, supporting the call volumes and reliability that long-horizon workflows require. Iterative planning enables agents to navigate environments they have never seen. Multi-agent collaboration brings domain precision to multi-step reasoning. Artifact-based context management improves accuracy while reducing inference cost and latency. Sandboxed execution ensures agents operate safely within defined boundaries, with every action governed and auditable.

Cloudera and NVIDIA bring this architecture to life through Cloudera Agent Studio, Cloudera AI Inference powered by NVIDIA NIM, and the NVIDIA Nemotron family of models. Together, they deliver the foundation, orchestration, and agentic reasoning needed to run enterprise AI agents directly on enterprise data: securely, privately, and at scale.

To learn more, see Cloudera Agent Studio in action.